The Thermal Wall AI Has Already Hit

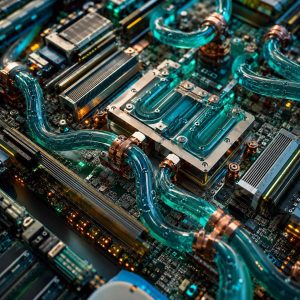

For years, data-center operators treated liquid cooling as an exotic option for HPC specialists. In 2026, that framing has collapsed. Single-phase immersion systems reliably support 200 kW per rack. Direct-to-chip cold-plate designs are now standard in every major GPU reference platform. Next-generation AI racks — built around chips like NVIDIA's Blackwell Ultra and Rubin-class accelerators — are specified at 200-250 kW, and hyperscaler roadmaps are already talking about 300-400 kW as the next step.

Air cooling, by contrast, plateaus somewhere in the 30-50 kW range per rack even with aggressive rear-door heat exchangers. Above that, you can move the air faster and chill it harder, but you are fighting physics. As one widely shared summary from Data Center Frontier and Lombard Odier puts it, liquid cooling "is no longer optional for hyperscalers; it is the baseline requirement for any facility that intends to host modern AI workloads. If you cannot support liquid, you cannot support AI."
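A back-of-envelope sketch shows why faster, colder air stops helping. It uses the sensible-heat relation Q = density × volumetric flow × specific heat × temperature rise. The fluid properties are approximate textbook values near room temperature. The 200 kW load and the 15 K and 10 K temperature rises are illustrative assumptions, not figures from any vendor specification.

```python
# Approximate textbook properties near 20 °C (assumed, not measured):
# density in kg/m^3, specific heat in J/(kg*K).
AIR = {"density": 1.2, "cp": 1005.0}
WATER = {"density": 1000.0, "cp": 4186.0}


def required_flow_m3_per_s(heat_w: float, fluid: dict, delta_t_k: float) -> float:
    """Volumetric flow needed to carry `heat_w` watts at a given temperature rise.

    Rearranges Q = density * flow * cp * delta_T for flow.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


def volumetric_heat_capacity_ratio(a: dict, b: dict) -> float:
    """How many times more heat fluid `a` carries per unit volume than fluid `b`."""
    return (a["density"] * a["cp"]) / (b["density"] * b["cp"])


if __name__ == "__main__":
    rack_heat_w = 200_000.0  # illustrative 200 kW rack

    air_flow = required_flow_m3_per_s(rack_heat_w, AIR, delta_t_k=15.0)
    water_flow = required_flow_m3_per_s(rack_heat_w, WATER, delta_t_k=10.0)

    print(f"Air:   {air_flow:.1f} m^3/s")
    print(f"Water: {water_flow * 1000:.1f} L/s")
    print(f"Water vs air, per unit volume: {volumetric_heat_capacity_ratio(WATER, AIR):.0f}x")
```

Under these assumptions, a single air-cooled rack would need roughly 11 cubic metres of air per second, while water needs under 5 litres per second to carry the same heat.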

What Changed the Economics

Three things moved together. First, GPU power density increased faster than anyone planned: an H100 at 700 W was already pushing limits; Blackwell and successor parts run 1-2 kW per socket, and denser boards multiply that. Second, hyperscaler capital plans for 2026-2028 are built on doubling or tripling AI capacity, which means building new facilities rather than retrofitting old ones, and new facilities are greenfield opportunities to build liquid-first. Third, the cost curve on liquid-cooling components has moved in the right direction as volume scales, while the energy cost of brute-force air cooling has gotten worse.

The market-size numbers reflect the shift. Analyst estimates put the data-center liquid-cooling market at around USD 6.6 billion in 2026, growing at about 28.7% annually through 2027. Goldman Sachs and TrendForce forecasts cited in industry reports expect liquid-cooled racks to account for between 50% and 76% of all new AI server deployments by the end of 2026 — a range that, regardless of where the true number lands, signals a decisive majority.

Operational Consequences for DC Operators

For operators, this changes the plant more than the racks. A liquid-cooling deployment at scale requires coolant distribution units (CDUs), redundant secondary loops, leak detection, fluid chemistry management and service procedures that legacy air-cooled facilities were never designed around. Raised-floor facilities built in the 2010s frequently lack the structural capacity and under-floor routing to retrofit easily, which is why much of the 2026 build-out is net-new rather than retrofit.

Capex and opex reshuffle accordingly. Liquid-cooling equipment adds upfront cost, but PUE improvements and the ability to run racks at 200 kW+ mean the same white-space footprint delivers far more compute. The cost per kW of delivered compute continues to fall even as per-rack capex rises, which is the economic logic that makes this transition inevitable.
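The footprint argument is simple arithmetic, sketched below. The rack densities (40 kW for air, 200 kW for liquid) come from the ranges quoted in this article. The 10 MW hall and both PUE values are hypothetical placeholders chosen for illustration, not measured data. PUE is taken in its standard sense: total facility power divided by IT power.

```python
import math


def racks_needed(it_load_kw: float, rack_density_kw: float) -> int:
    """Number of racks required to host a given IT load at a given per-rack density."""
    if rack_density_kw <= 0:
        raise ValueError("rack_density_kw must be positive")
    return math.ceil(it_load_kw / rack_density_kw)


def facility_power_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw implied by PUE = facility power / IT power."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


if __name__ == "__main__":
    it_load_kw = 10_000.0  # hypothetical 10 MW of IT load

    # (label, per-rack kW from the article's ranges, hypothetical PUE)
    scenarios = [
        ("air-cooled", 40.0, 1.5),
        ("liquid-cooled", 200.0, 1.2),
    ]

    for label, density_kw, pue in scenarios:
        racks = racks_needed(it_load_kw, density_kw)
        total_kw = facility_power_kw(it_load_kw, pue)
        print(f"{label}: {racks} racks, {total_kw:,.0f} kW facility power")
```

With these inputs the same IT load fits in one fifth as many racks at the higher density. The PUE gap depends entirely on the placeholder values, so substitute figures from an actual facility before drawing conclusions.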

What Enterprise Buyers Should Actually Do

For enterprises buying AI capacity rather than operating the DC themselves, three things follow. First, when evaluating colocation or managed AI infrastructure contracts, liquid-cooling capability is now a basic qualification question, not a nice-to-have — ask what per-rack power density the facility supports today and on the 12-month roadmap. Second, sustainability reporting frameworks increasingly distinguish between air-cooled and liquid-cooled compute for scope-3 and PUE disclosures; your ESG team will notice. Third, on-prem AI clusters still make sense for some regulated workloads, but only if your facilities team can credibly execute a liquid-cooling deployment; otherwise, managed AI services or hyperscaler regions are the pragmatic choice.

The Next Inflection Point

The next generation of accelerators is already pushing discussion past 300 kW per rack, with some roadmaps sketching 1 MW rack designs by the late 2020s. At those densities, two-phase immersion and engineered coolants move from "niche HPC" to mainstream. The operators who complete the single-phase liquid transition well in 2026 will be positioned for the next jump; those still trying to retrofit air-cooled facilities will continue to fall behind on both cost and capacity.

Frequently Asked Questions

Why can't modern AI racks use air cooling?

Air cooling reaches a practical ceiling around 30-50 kW per rack, even with aggressive rear-door heat exchangers and high-velocity airflow. AI racks built around current-generation GPUs (Blackwell-class and successors) are specified at 120-250 kW per rack, with roadmaps pointing to 300-400 kW. Above roughly 100 kW, moving heat via air becomes uneconomic and unreliable. Liquid, whether in direct-to-chip cold plates or single-phase immersion, carries on the order of 1,000 times more heat per unit volume than air for the same temperature rise; for water, the ratio of volumetric heat capacities is roughly 3,500.

Is liquid cooling actually safer and more reliable than air?

In modern deployments, yes. Direct-to-chip and single-phase immersion designs include leak detection, sealed loops, and engineered coolants chosen for stability and component compatibility. Many hyperscalers report equal or better uptime on liquid-cooled fleets versus air-cooled legacy capacity. The remaining risks — coolant chemistry management, service procedures, retrofit complexity — are operational challenges that the industry has largely solved at scale.

What does this mean for enterprises that don't run their own datacenters?

The main implication is that colocation and managed-cloud contracts need to include explicit power-density and cooling questions. If your colocation provider cannot support at least 100 kW per rack today and is not on a clear path to 200 kW within the next year, your AI roadmap is constrained regardless of what GPUs you can buy. This is now a basic procurement question for any 2026 infrastructure contract.
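As a rough illustration, the two thresholds above can be written down as a screening check. Everything in this sketch is hypothetical: the `ColoOffer` fields, the function name, and the sample provider are invented for the example. Only the 100 kW and 200 kW bars come from the text.

```python
from dataclasses import dataclass


@dataclass
class ColoOffer:
    """Hypothetical summary of a colocation provider's answers to the density questions."""
    name: str
    rack_kw_today: float
    rack_kw_in_12_months: float
    liquid_cooling: bool


def density_gaps(offer: ColoOffer,
                 min_today_kw: float = 100.0,
                 min_roadmap_kw: float = 200.0) -> list[str]:
    """Return the reasons an offer falls short; an empty list means it passes."""
    gaps = []
    if not offer.liquid_cooling:
        gaps.append("no liquid-cooling capability")
    if offer.rack_kw_today < min_today_kw:
        gaps.append(f"supports {offer.rack_kw_today:g} kW/rack today, below {min_today_kw:g} kW")
    if offer.rack_kw_in_12_months < min_roadmap_kw:
        gaps.append(f"12-month roadmap is {offer.rack_kw_in_12_months:g} kW/rack, below {min_roadmap_kw:g} kW")
    return gaps


if __name__ == "__main__":
    # Invented provider used only to exercise the check.
    offer = ColoOffer("Example Colo", rack_kw_today=60.0,
                      rack_kw_in_12_months=150.0, liquid_cooling=True)
    for gap in density_gaps(offer):
        print(f"{offer.name}: {gap}")
```

A check like this only flags the headline numbers. Contract terms, CDU redundancy, and service procedures still need a human review.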

Sources & Further Reading

- Liquid Cooling: The 2026 Mandate for 100kW AI Racks — EnkiAI

- Why Liquid Cooling Will Dominate AI Data Centres in 2026 — Lombard Odier

- For AI and HPC, Data Center Liquid Cooling Is Now — Data Center Frontier

- The Boiling Point: Liquid Cooling Becomes the Mandatory Standard as AI Racks Cross 120kW — Financial Content

- Surfs Up! The Liquid Cooling Mandate — AutomatedBuildings