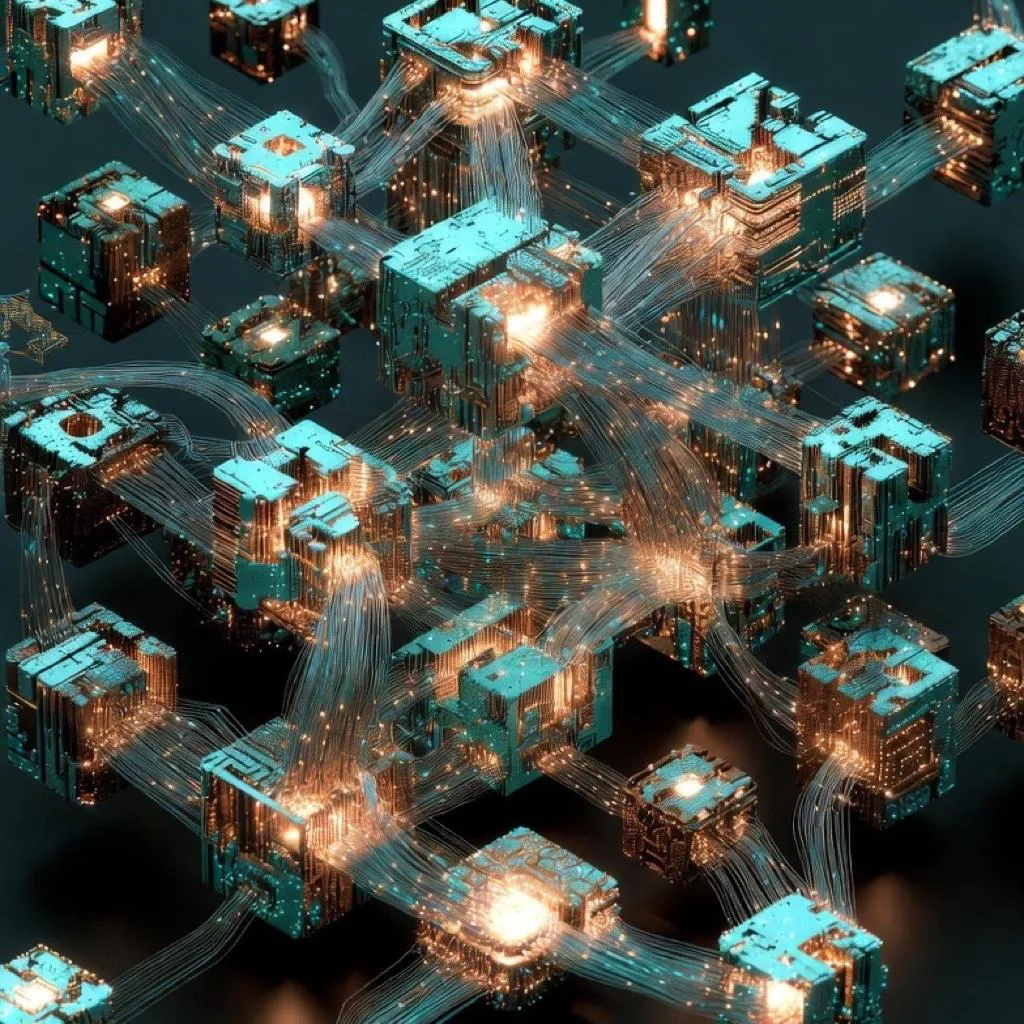

Mixture of Experts: How MoE Architecture Is Making Frontier AI Affordable

ALGERIATECH Editorial

February 27, 2026

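GPT-4 is widely reported, though never confirmed by OpenAI, to have around 1.8 trillion parameters spread across a mixture of experts. If those reports are accurate, then on any single token (a word, a fragment of a word, or a punctuation mark) only a fraction of those parameters does any work. The vast majority sit idle.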
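To make the idea of idle parameters concrete, here is a minimal sketch of top-k expert routing in plain Python. The configuration is hypothetical and chosen only for illustration: 16 tiny experts, 2 active per token, each expert a single 8×8 linear map, and gate weights computed as a softmax over the chosen experts' router scores (one common variant, not necessarily what any particular production model uses). It is not GPT-4's confirmed design. The point is that a router scores every expert, but only the top 2 are ever evaluated, so most expert weights go untouched for that token.

```python
import math
import random

# Hypothetical configuration, chosen for illustration only.
NUM_EXPERTS = 16
TOP_K = 2
D_MODEL = 8

random.seed(0)

def rand_matrix(rows, cols):
    """Random weight matrix as a list of rows."""
    return [[random.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    """Matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def softmax(xs):
    """Numerically stable softmax."""
    mx = max(xs)
    exps = [math.exp(x - mx) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

# Router produces one score per expert; each expert is one D_MODEL x D_MODEL map.
router = rand_matrix(NUM_EXPERTS, D_MODEL)
experts = [rand_matrix(D_MODEL, D_MODEL) for _ in range(NUM_EXPERTS)]

def moe_layer(token):
    """Route one token vector to its TOP_K experts and mix their outputs."""
    logits = matvec(router, token)
    chosen = sorted(range(NUM_EXPERTS), key=lambda i: logits[i], reverse=True)[:TOP_K]
    # Gate weights: softmax over the chosen experts' scores only.
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * D_MODEL
    for g, i in zip(gates, chosen):
        y = matvec(experts[i], token)  # only the chosen experts are evaluated
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, chosen

token = [random.gauss(0.0, 1.0) for _ in range(D_MODEL)]
output, used = moe_layer(token)

# Count expert weights only (router and any shared layers are excluded).
expert_params = NUM_EXPERTS * D_MODEL * D_MODEL
active_params = TOP_K * D_MODEL * D_MODEL
print("experts used:", used)
print(f"active expert params: {active_params}/{expert_params} ({active_params / expert_params:.1%})")
```

With 2 of 16 experts active, only 12.5% of the expert weights are touched for this token. Real models also have shared components (such as attention layers) that run on every token, so the overall active fraction is higher than this expert-only count.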