The Memory Wall That HBM4 Breaks Through

Every large AI model, from GPT-5.4 to Gemini 3.1, runs into the same bottleneck: the GPU can compute faster than memory can feed it data. This “memory wall” leaves processors idle while they wait for the next batch of weights and activations, wasting billions of dollars in silicon. In February 2026, Samsung began shipping the industry’s first commercial HBM4, a generational leap in bandwidth that pushes the wall back significantly.

Built on Samsung’s 6th-generation 10nm-class (1c) DRAM process, HBM4 runs at a consistent 11.7Gbps per pin and can reach up to 13Gbps. With 2,048 data I/O pins, double the 1,024 of HBM3E, a single HBM4 stack delivers up to 3.3TB/s of bandwidth at the 13Gbps peak, a 2.7x improvement over its predecessor.
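The headline figure follows from simple arithmetic: per-stack bandwidth is the number of data pins times the per-pin rate, divided by 8 to convert bits to bytes. The minimal Python sketch below uses decimal units (1TB = 1,000GB). The HBM3E rate of 9.6Gbps per pin is an assumption chosen for comparison, not a figure from Samsung’s announcement.

```python
def stack_bandwidth_tbps(data_pins: int, gbps_per_pin: float) -> float:
    """Peak per-stack bandwidth in TB/s (decimal units)."""
    total_gbps = data_pins * gbps_per_pin  # aggregate gigabits per second
    return total_gbps / 8 / 1000           # bits -> bytes, then GB -> TB

hbm4_typical = stack_bandwidth_tbps(2048, 11.7)  # consistent operating speed
hbm4_peak = stack_bandwidth_tbps(2048, 13.0)     # maximum rated speed
# Assumption: an HBM3E baseline running at 9.6Gbps on 1,024 pins.
hbm3e = stack_bandwidth_tbps(1024, 9.6)

print(f"HBM4 @ 11.7Gbps: {hbm4_typical:.2f} TB/s")
print(f"HBM4 @ 13Gbps:   {hbm4_peak:.2f} TB/s")
print(f"HBM3E @ 9.6Gbps: {hbm3e:.2f} TB/s")
print(f"Peak ratio:      {hbm4_peak / hbm3e:.1f}x")
```

Under these assumptions, the 3.3TB/s figure corresponds to the 13Gbps peak, while the 11.7Gbps operating speed works out to roughly 3.0TB/s. The 2.7x ratio likewise depends on which HBM3E speed grade is used as the baseline.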

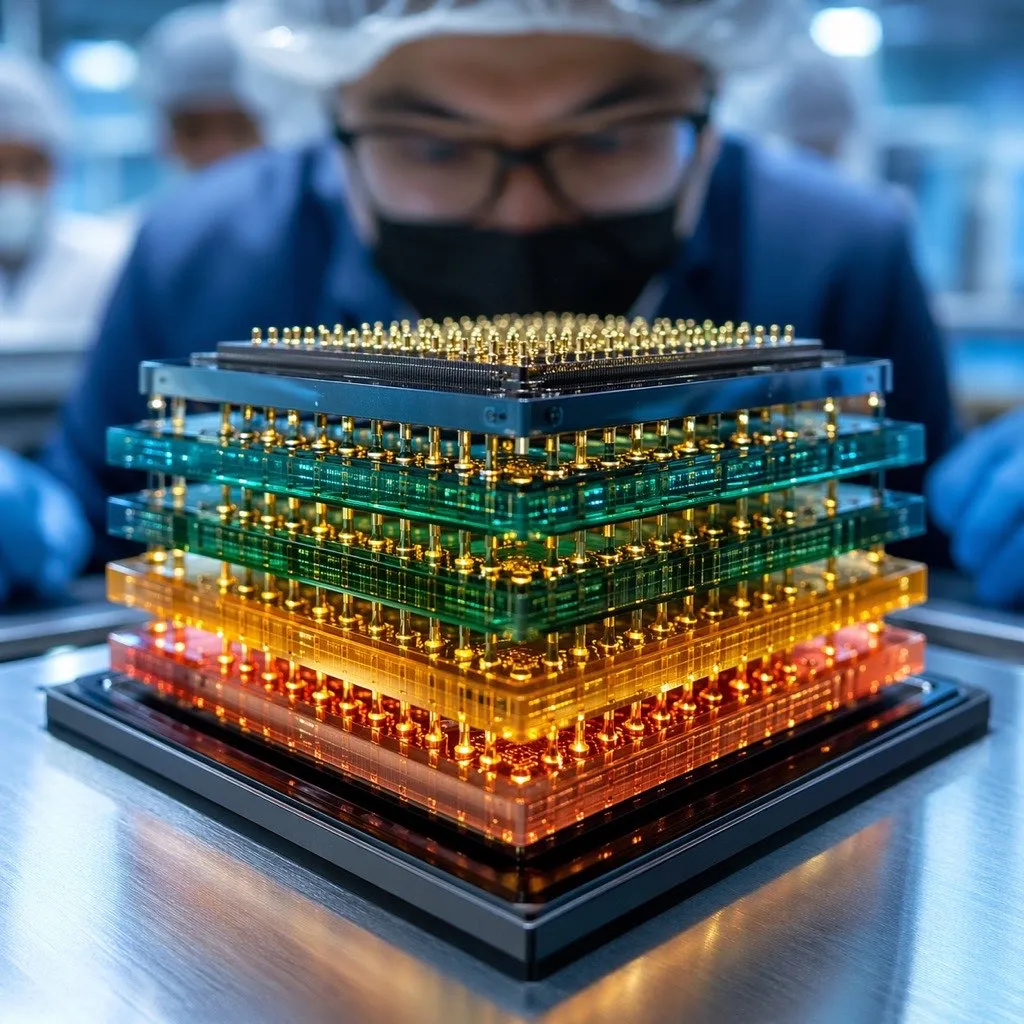

Stacking Higher: From 36GB to 48GB

Samsung’s current HBM4 lineup uses 12-layer stacking technology, offering capacities from 24GB to 36GB per stack. The next roadmap target, however, is 16-layer stacking, which will push capacity to 48GB per stack. For context, NVIDIA’s current Blackwell B200 GPU uses HBM3E stacks at 24GB each. Doubling that to 48GB per stack means larger AI models can fit entirely in GPU memory without the performance penalty of offloading to slower host memory or storage.
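Stack capacity is the layer count times the capacity of each DRAM die, and GPU capacity is the stack count times the stack capacity. The sketch below assumes 24Gb (3GB) dies, which is consistent with 36GB from 12 layers and 48GB from 16 layers but is not stated in the source. It also assumes a hypothetical eight-stack package like the B200’s (8 × 24GB = 192GB) and a hypothetical 300B-parameter model stored at 2 bytes per parameter; the count covers weights only, not KV cache, activations, or optimizer state.

```python
import math

def stack_capacity_gb(layers: int, die_gbit: int) -> float:
    """Capacity of one HBM stack in GB: layers x die density (Gb) / 8."""
    return layers * die_gbit / 8

def gpus_to_hold_weights(params_billion: float, bytes_per_param: float,
                         stacks_per_gpu: int, gb_per_stack: float) -> int:
    """Minimum GPU count whose HBM can hold the weights (weights only)."""
    weights_gb = params_billion * bytes_per_param  # 1e9 params x bytes each = GB
    per_gpu_gb = stacks_per_gpu * gb_per_stack
    return math.ceil(weights_gb / per_gpu_gb)

hbm4_12h = stack_capacity_gb(12, 24)  # assumed 24Gb dies, 12 layers
hbm4_16h = stack_capacity_gb(16, 24)  # assumed 24Gb dies, 16 layers

# Hypothetical 300B-parameter model in 16-bit precision on eight-stack GPUs.
for label, gb in [("HBM3E 24GB", 24.0), ("HBM4 36GB", hbm4_12h), ("HBM4 48GB", hbm4_16h)]:
    n = gpus_to_hold_weights(300, 2, stacks_per_gpu=8, gb_per_stack=gb)
    print(f"{label}: {8 * gb:.0f}GB per GPU -> at least {n} GPUs")
```

In this illustration the 600GB of weights needs at least four 192GB GPUs, three 288GB GPUs, or two 384GB GPUs.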

The engineering challenge is formidable. Each DRAM die must be thinned to roughly 30 micrometers before stacking — thinner than a human hair — with thousands of through-silicon vias (TSVs) connecting layers vertically. Samsung’s 1c DRAM yields run around 60%, and effective yields drop further after advanced back-end processing. The 16-layer configuration amplifies these challenges, as any defect in any layer can render the entire stack unusable.
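The effect of adding layers can be seen with a deliberately simplified model: if each layer (a die plus its bonding step) survives independently with probability p, and a single failure scraps the whole stack, then stack yield is p raised to the layer count. The per-layer probabilities below are hypothetical illustrations, not Samsung figures. Real flows screen known-good dies before stacking and may apply repair, so actual yields differ.

```python
def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Probability that every layer in the stack is good, assuming independent failures."""
    if not 0.0 <= per_layer_yield <= 1.0:
        raise ValueError("per_layer_yield must be between 0 and 1")
    return per_layer_yield ** layers

# Hypothetical per-layer survival rates (die plus bonding), for illustration only.
for p in (0.99, 0.98, 0.95):
    y12 = stack_yield(p, 12)
    y16 = stack_yield(p, 16)
    print(f"p={p:.2f}: 12-high {y12:.1%}, 16-high {y16:.1%}")
```

Under this model, even a hypothetical 98% per-layer rate loses about six percentage points of stack yield when moving from 12 to 16 layers (roughly 78% to 72%).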


Efficiency Gains Beyond Raw Speed

Speed is not the only improvement. HBM4 achieves a 40% improvement in power efficiency over HBM3E through low-voltage TSV technology and power distribution network optimization. Thermal resistance improves by 10%, and heat dissipation by 30%. These numbers matter because HBM stacks sit directly on or adjacent to the GPU die, where temperatures routinely exceed 100 degrees Celsius. Better thermal performance means higher sustained clock speeds and longer component lifetimes.

The Three-Way Race for NVIDIA’s Orders

HBM4 is where Samsung, SK Hynix, and Micron compete most intensely, because NVIDIA is by far the largest buyer at scale. SK Hynix currently holds approximately 70% of NVIDIA’s HBM4 allocation for the upcoming Vera Rubin platform, with Samsung capturing roughly 30%, a meaningful comeback after being largely shut out of HBM3E supply to NVIDIA.

Samsung is fighting aggressively to close the gap. The company has passed NVIDIA’s quality testing, begun mass production at its Pyeongtaek campus, and projects that HBM sales will more than triple in 2026 compared to 2025. Samsung also partnered with AMD on next-generation AI memory solutions, reducing its dependence on NVIDIA.

Meanwhile, Samsung is already previewing the next generation. At NVIDIA GTC 2026, the company showcased HBM4E, which delivers 16Gbps per pin and 4.0TB/s bandwidth per stack. HBM4E sampling begins in H2 2026, with custom configurations reaching customers in 2027.

Why HBM4 Matters for AI Infrastructure

The HBM market is experiencing what analysts call an “AI memory supercycle.” SK Hynix forecasts HBM growing 30% annually through 2030, and Micron’s entire HBM capacity is sold out through 2026. The supply-demand imbalance is so severe that gaming GPU production has faced 40% cuts as memory manufacturers prioritize AI chip allocation.

For enterprises building or buying AI infrastructure, HBM4 has immediate implications. Training runs that previously required multi-node setups because model weights exceeded single-GPU memory may fit on fewer GPUs with 48GB HBM4 stacks. Inference workloads benefit even more: token generation is typically limited by memory bandwidth, so higher bandwidth means lower latency per token, which directly affects the cost and speed of serving AI models through APIs.
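The inference point can be made concrete with a common back-of-envelope bound. During single-stream decoding, each new token requires reading roughly all model weights from HBM once, so time per token is at least the weight bytes divided by aggregate memory bandwidth. The sketch assumes a hypothetical 70B-parameter model with 8-bit weights, a hypothetical eight-stack GPU, and about 1.2TB/s per stack as an assumed HBM3E rate. It ignores KV-cache traffic, batching, and compute limits, so it is a lower bound rather than a prediction.

```python
def min_ms_per_token(params_billion: float, bytes_per_param: float,
                     stacks: int, tbps_per_stack: float) -> float:
    """Bandwidth-bound lower limit on decode time per token, in milliseconds."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = stacks * tbps_per_stack * 1e12
    return weight_bytes / bandwidth_bytes_per_s * 1e3

# Hypothetical 70B model with 8-bit weights on an eight-stack GPU.
hbm3e_ms = min_ms_per_token(70, 1, stacks=8, tbps_per_stack=1.2)  # assumed HBM3E rate
hbm4_ms = min_ms_per_token(70, 1, stacks=8, tbps_per_stack=3.3)   # HBM4 peak per stack
print(f"HBM3E: >= {hbm3e_ms:.2f} ms/token, HBM4: >= {hbm4_ms:.2f} ms/token")
```

Because the bound scales inversely with bandwidth, the per-token floor shrinks by the same factor as the bandwidth gain, which is why decode-heavy serving tends to benefit most.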

Frequently Asked Questions

What is HBM4 and why does it matter for AI?

HBM4, the sixth generation of High Bandwidth Memory (following HBM, HBM2, HBM2E, HBM3, and HBM3E), is the latest memory technology designed specifically for AI accelerators. Samsung’s HBM4 delivers up to 3.3TB/s of bandwidth per stack, 2.7 times that of HBM3E, with capacities expected to reach 48GB once 16-layer stacking arrives. This matters because AI models are increasingly limited by memory bandwidth rather than compute power, and HBM4 directly addresses that bottleneck.

How does Samsung’s HBM4 compare to SK Hynix’s offering?

Samsung says it shipped the industry’s first commercial HBM4 in February 2026. However, SK Hynix holds approximately 70% of NVIDIA’s HBM4 allocation for the Vera Rubin platform versus Samsung’s 30%. Both companies are racing to deliver 16-layer configurations, and Samsung has already previewed HBM4E (4.0TB/s bandwidth) at GTC 2026. Micron is a distant third, with its own HBM4 development underway.

When will HBM4-powered AI hardware be available in cloud?

NVIDIA’s Vera Rubin platform, which uses HBM4 memory, is expected to launch in the second half of 2026. Cloud providers typically need three to six months after a GPU launch to deploy at scale, so HBM4-based cloud instances should become available in early to mid-2027. Samsung’s next-generation HBM4E, with even higher bandwidth of 4.0TB/s per stack, will follow, with sampling in the second half of 2026 and custom configurations reaching customers in 2027.