In early 2024, a finance employee at a multinational firm based in Hong Kong sat down for what appeared to be a routine video conference. The call included several familiar faces: a senior executive, a few colleagues, and the company’s chief financial officer. The CFO asked the employee to authorize a series of wire transfers totaling roughly $25 million. Everything looked and sounded normal.

Every person on that call was a deepfake.

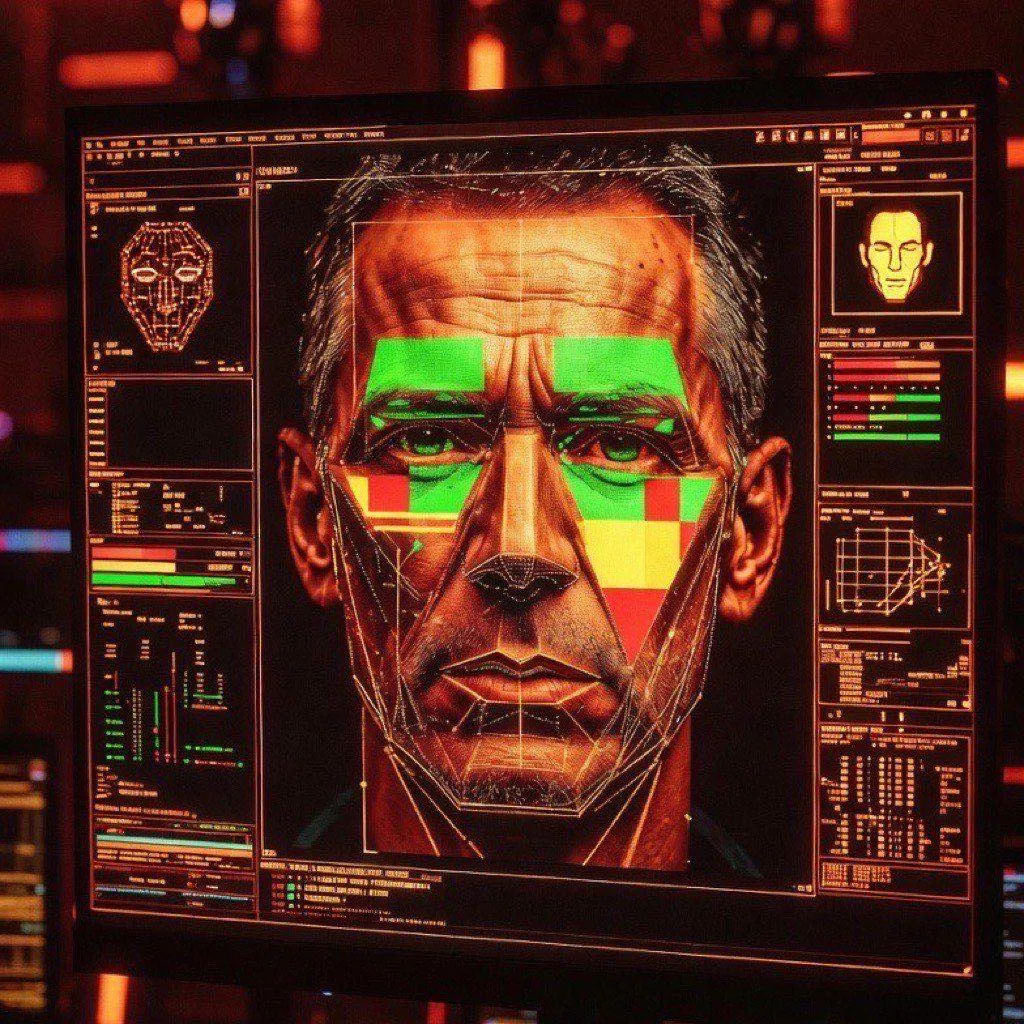

The Hong Kong case, confirmed by local police and widely reported in February 2024, became a landmark in the history of financial crime. It was not the first case of AI-assisted fraud, but at the time it was the most expensive publicly reported instance of a deepfake video call being used to authorize wire transfers. It proved something the security industry had warned about for years: deepfake CEO fraud is no longer a theoretical threat. It is a documented, repeatable, and scaling attack vector.

What Happened in Hong Kong

The attacker group spent weeks in reconnaissance before the call. They harvested publicly available video footage of the company’s executives — conference recordings, earnings calls, LinkedIn interviews — and fed it into deepfake generation software. The output was convincing enough to fool a trained finance professional across a live video call.

The employee, following what appeared to be a direct instruction from senior leadership, authorized 15 separate transactions across multiple accounts. The total loss was HK$200 million, approximately $25.6 million USD. The attack was not discovered until the employee checked back with headquarters through a separate channel. By then, the money was gone.

Hong Kong police arrested six individuals in connection with the case, but the ringleaders were never publicly identified, and recovery of funds remained partial at best.

How Deepfake CEO Fraud Works

The attack that hit Hong Kong was sophisticated, but the underlying technique is becoming democratized. Deepfake CEO fraud, in effect Business Email Compromise (BEC) augmented with AI, now operates across three distinct channels:

Video deepfakes use generative AI to animate a target’s face over a live or pre-recorded video feed. Modern tools can do this in real time with as little as a few minutes of source footage. The quality threshold needed to fool someone in a business context — where participants expect minor lag and compression artifacts — is far lower than what most people assume.

Voice cloning has become the more common entry point because it requires less source material and can be deployed over a phone call. A 10-to-30-second audio sample is sufficient for most commercial voice cloning APIs to produce a passable replica. Attackers record executives speaking at public events, in podcast appearances, or in leaked internal recordings, then use the clone to call a finance team member directly.

Text-based BEC remains the baseline: AI-generated emails that impersonate a CEO or CFO, matching writing style, tone, and urgency. Large language models have made generic phishing emails largely obsolete in this category — modern BEC emails are highly personalized and increasingly difficult to flag on style alone.

In many advanced attacks, all three channels are combined in sequence: a spoofed email primes the target, followed by a voice call “confirming” the request, followed by a video call that closes the authorization.

Why This Attack Is Scaling

Several structural forces are amplifying the threat simultaneously.

The cost of producing convincing deepfakes has collapsed. In 2019, a high-quality face-swap required a GPU farm and weeks of training. In 2026, consumer-grade software can produce real-time video deepfakes on a standard laptop. Voice cloning APIs are available via subscription for under $50 per month. The technical barrier is no longer the bottleneck.

Remote work has permanently weakened the informal verification mechanisms that used to exist in physical offices. When a CFO walked down the hall to ask for a wire transfer, the employee could verify identity through multiple sensory channels simultaneously. In a remote-first environment, a video call is the closest available approximation — and attackers are exploiting that gap.

The global expansion of international wire infrastructure means that more businesses, including mid-sized and smaller companies, are now executing cross-border transfers regularly. The attack surface has widened. Sophisticated fraud groups, many of them operating from jurisdictions with limited extradition cooperation, are prioritizing this attack pattern because the expected return per attempt is high and forensic traceability is difficult.

Documented Cases and Financial Scale

Beyond the Hong Kong incident, the pattern is well established. In 2019, a UK energy company was defrauded of €220,000 after an executive received a phone call from what he believed was his German parent company’s CEO, later confirmed to be a voice clone. That case was among the first documented AI voice fraud incidents and was investigated by Euler Hermes, the company’s cyber insurer.

The FBI’s Internet Crime Complaint Center (IC3) reported that Business Email Compromise losses exceeded $2.9 billion in 2023 across approximately 21,000 complaints filed in the United States alone. AI augmentation of these attacks is now documented in IC3 advisories, and the agency has issued specific warnings that voice cloning and video deepfakes are being used to raise the success rate of BEC attacks.

Europol and Interpol have both published threat assessments noting that synthetic media fraud is moving from nation-state actors into organized criminal networks, driven by the commoditization of the underlying tools.

Defenses That Work

The Hong Kong attack succeeded for a specific reason: the employee had no out-of-band verification protocol in place. Defending against deepfake CEO fraud does not require exotic technology. It requires process discipline.

Out-of-band confirmation is mandatory. Any wire transfer request above a defined threshold — regardless of who appears to be asking — must be confirmed through a second, independent communication channel. If the request arrives via video call, the confirmation call goes to a pre-registered phone number. If the request arrives by email, the verification happens by voice on a number pulled from the internal directory, not from the email signature.
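The rule above can be sketched in a few lines of code. This is a minimal illustration, not a production control: the threshold, the directory contents, and the names `WireRequest` and `callback_number` are all hypothetical. The point it shows is that the confirmation number always comes from a pre-registered directory and never from the request itself, whichever channel the request arrived on.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values for illustration; real ones come from treasury policy.
CONFIRMATION_THRESHOLD_USD = 10_000
TRUSTED_DIRECTORY = {"cfo": "+852-5550-0100"}  # pre-registered callback numbers


@dataclass(frozen=True)
class WireRequest:
    claimed_requester: str   # who the request appears to come from
    amount_usd: float
    arrival_channel: str     # "video", "email", or "phone"
    contact_in_request: str  # number supplied in the message itself; never trusted


def callback_number(request: WireRequest) -> Optional[str]:
    """Return the number to call for confirmation, or None if below the threshold."""
    if request.amount_usd <= CONFIRMATION_THRESHOLD_USD:
        return None
    # Use only the trusted directory; request.contact_in_request is ignored on purpose.
    if request.claimed_requester not in TRUSTED_DIRECTORY:
        raise LookupError("no pre-registered number; escalate instead of paying")
    return TRUSTED_DIRECTORY[request.claimed_requester]
```

Raising an error when no pre-registered number exists is a deliberate choice in this sketch: a request that cannot be verified independently should stop the payment rather than fall back to the contact details the requester supplied.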

Establish verbal code words. Sensitive internal operations should use pre-agreed challenge phrases that are never transmitted digitally and change on a regular schedule. A deepfake cannot know a code word that was agreed verbally in a physical setting and never recorded.

Enforce dual-authorization on large transfers. No single employee should be able to authorize a wire above a threshold unilaterally, regardless of how the instruction was received. Two independent sign-offs create redundancy that social engineering attacks struggle to defeat simultaneously.
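As a rough sketch of how a payment system might enforce this, the example below releases a transfer above a threshold only after two different employees have approved it. The threshold value and the names `PendingTransfer`, `approve`, and `can_release` are assumptions made for illustration, not part of any particular banking or ERP product.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical threshold; a real value would be set by treasury policy.
DUAL_AUTH_THRESHOLD_USD = 10_000


@dataclass
class PendingTransfer:
    amount_usd: float
    approvers: Set[str] = field(default_factory=set)

    def approve(self, employee_id: str) -> None:
        # A set means the same person approving twice still counts once.
        self.approvers.add(employee_id)

    def can_release(self) -> bool:
        required = 2 if self.amount_usd > DUAL_AUTH_THRESHOLD_USD else 1
        return len(self.approvers) >= required


transfer = PendingTransfer(amount_usd=1_000_000)
transfer.approve("alice")
transfer.approve("alice")            # repeat approval by the same employee
blocked = not transfer.can_release()  # still only one distinct approver
transfer.approve("bob")
released = transfer.can_release()     # two distinct approvers now present
```

Storing approvers in a set is what makes the sign-offs independent in this sketch: an attacker who convinces one employee cannot satisfy the check by having that employee approve twice.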

Train finance and treasury staff specifically. Generic cybersecurity awareness training does not cover deepfakes. Finance teams need scenario-based training that includes simulated deepfake calls, voice clone exercises, and clear escalation protocols. The goal is to build skepticism as a reflex, not a policy to be consulted.

Technical controls matter at the perimeter. Email authentication standards (DMARC, DKIM, SPF) eliminate a significant fraction of spoofed sender BEC attempts. Some organizations are evaluating real-time deepfake detection tools for video conferencing platforms, though detection accuracy remains imperfect against high-quality attacks. Behavioral analytics on payment workflows — flagging unusual recipient accounts, unusual transfer sizes, or unusual request sequences — add a layer of friction that stops automated and opportunistic attacks.
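The behavioral checks on payment workflows can be illustrated with a simple rule-based sketch. It covers only two of the signals mentioned above, unusual recipient accounts and unusual transfer sizes, and leaves out request-sequence analysis. The names `Payment` and `flag_payment` and the `size_multiplier` default of 3.0 are hypothetical; a real system would tune these rules against its own payment history.

```python
import statistics
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class Payment:
    recipient_account: str
    amount_usd: float


def flag_payment(
    payment: Payment,
    known_recipients: Set[str],
    past_amounts: Iterable[float],
    size_multiplier: float = 3.0,  # hypothetical cutoff relative to the median
) -> List[str]:
    """Return a list of reasons this payment deserves manual review."""
    flags = []
    if payment.recipient_account not in known_recipients:
        flags.append("new_recipient")
    history = list(past_amounts)
    if history:
        typical = statistics.median(history)
        if payment.amount_usd > size_multiplier * typical:
            flags.append("unusual_size")
    return flags
```

A flagged payment in this sketch is not rejected outright; the flags are meant to route it to a human reviewer, which adds friction without blocking legitimate but unusual transfers.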

The core insight from the Hong Kong case is that the attacker did not defeat technology. They defeated a process. The finance employee followed instructions from what appeared to be authority figures. The absence of a protocol that required independent verification was the actual vulnerability.

In 2026, any organization handling international wire transfers without out-of-band verification for large transactions is operating with a known, exploitable gap. The technology to close that gap costs nothing. It requires only the discipline to implement and enforce it.

Frequently Asked Questions

What is deepfake CEO fraud?

Deepfake CEO fraud is a form of Business Email Compromise in which attackers use AI-generated video, cloned voices, or AI-written emails to impersonate a senior executive and push an employee into authorizing a payment, typically a wire transfer.

Why does deepfake CEO fraud matter?

Losses are large and the tooling is cheap. The Hong Kong case alone cost HK$200 million, and the FBI’s IC3 reported more than $2.9 billion in BEC losses for 2023. Any organization that releases wire transfers on the strength of a single call or email is exposed.

How does deepfake CEO fraud work?

Attackers collect public video and audio of executives, generate a face or voice replica, and then contact finance staff by email, phone, or video call. In advanced attacks the channels are chained: an email primes the target, a voice call confirms the request, and a video call closes the authorization.

Sources & Further Reading

- Hong Kong company loses HK$200 million in deepfake video conference scam — South China Morning Post

- FBI IC3: Criminals Use Generative AI to Facilitate Financial Fraud — FBI Internet Crime Complaint Center

- Facing Reality: Law Enforcement and the Challenge of Deepfakes — Europol

- FBI IC3 2023 Internet Crime Report — FBI Internet Crime Complaint Center

- Voice Deepfakes Are Calling — Krebs on Security