The Energy Problem with Vision-Language-Action Models

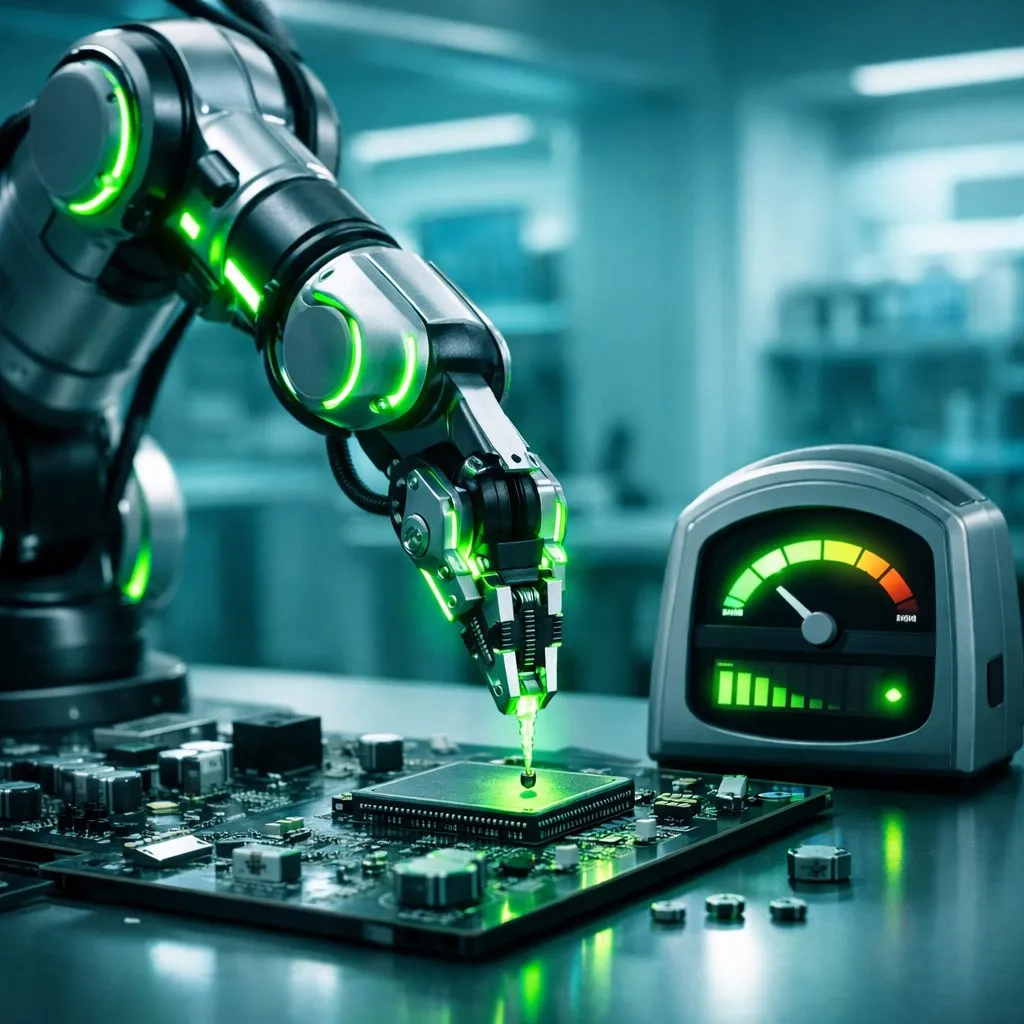

Vision-Language-Action (VLA) models represent the next frontier of AI, extending large language model capabilities into the physical world. Unlike chat assistants such as ChatGPT or Gemini, which respond with text, VLA models ingest visual data from cameras, interpret natural language instructions, and translate both into real-world robotic actions.
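To make that input/output contract concrete, here is a minimal Python sketch of the interface described above. The class names (`Observation`, `Action`, `VLAPolicy`, `ToyPolicy`) and the action format are hypothetical, and the toy policy is a keyword rule standing in for a learned model. It does not reflect how any real VLA system works internally.

```python
from dataclasses import dataclass
from typing import List, Protocol


@dataclass
class Observation:
    """One VLA input step: a camera frame plus a natural-language instruction."""
    image: List[List[float]]   # hypothetical: grayscale frame as rows of pixel intensities
    instruction: str           # e.g. "put the red block on the blue block"


@dataclass
class Action:
    """Hypothetical low-level command: end-effector displacement plus gripper state."""
    dx: float
    dy: float
    dz: float
    gripper_closed: bool


class VLAPolicy(Protocol):
    """Anything that maps (image, instruction) to a robot action."""
    def act(self, obs: Observation) -> Action: ...


class ToyPolicy:
    """Stand-in for a learned model: lowers and closes the gripper if told to 'pick'."""

    def act(self, obs: Observation) -> Action:
        wants_grasp = "pick" in obs.instruction.lower()
        return Action(dx=0.0, dy=0.0, dz=-0.05 if wants_grasp else 0.0,
                      gripper_closed=wants_grasp)


policy: VLAPolicy = ToyPolicy()
obs = Observation(image=[[0.0] * 4 for _ in range(4)], instruction="Pick up the cup")
print(policy.act(obs))  # Action(dx=0.0, dy=0.0, dz=-0.05, gripper_closed=True)
```

In a real VLA model, `act` would run a neural network over the image and instruction; the point here is only the shape of the contract: perception and language in, motor command out.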

But this power comes at a steep cost. Training a standard VLA model for robotic manipulation can consume over 36 hours of GPU time on high-end hardware, translating into massive energy expenditure. As organizations deploy more robotics and embodied AI systems, the energy footprint threatens to become unsustainable. With global AI infrastructure already consuming an estimated 4.3% of worldwide electricity, finding efficiency gains is not optional — it is existential.

The Neuro-Symbolic Breakthrough

Researchers at Tufts University have developed a neuro-symbolic AI architecture that combines classical symbolic reasoning with learned robotic control. Rather than relying solely on pattern recognition from enormous datasets, the system uses abstract rules about shape, balance, and spatial relationships to plan more effectively.

The results are striking. The neuro-symbolic system achieved a 95% success rate on structured manipulation tasks, matching or exceeding standard VLA performance, while using just 1% of the energy needed to train a standard VLA model. Training took only 34 minutes, compared with more than a day and a half for conventional approaches.
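These figures can be sanity-checked with simple arithmetic. Taking "more than a day and a half" at its lower bound of 36 hours, the Python snippet below computes the wall-clock ratio. Training time alone shrinks by roughly 64x, to about 1.6% of the baseline, so the ~1% energy figure would also reflect a baseline longer than 36 hours, lower average power draw, or rounding; the reported figures do not say which.

```python
conventional_minutes = 36 * 60   # "over 36 hours", taken at its lower bound
neuro_symbolic_minutes = 34      # reported neuro-symbolic training time

# Wall-clock comparison only; energy also depends on power draw, which is not reported here
time_ratio = conventional_minutes / neuro_symbolic_minutes
time_fraction = neuro_symbolic_minutes / conventional_minutes

print(f"speedup in training time: {time_ratio:.1f}x")             # 63.5x
print(f"fraction of conventional time: {time_fraction:.2%}")      # 1.57%
```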

The key insight is that symbolic reasoning eliminates unnecessary trial and error. Instead of learning every edge case from data, the system reasons about physical principles, dramatically reducing the number of training iterations needed.
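The Python sketch below illustrates the general idea with a toy block-stacking world. It is not the Tufts architecture: the state format, the two rules (only the top block of a stack can move; a block may rest only on an empty spot or on a strictly larger block), and every function name are hypothetical. What it shows is how a symbolic filter can discard physically invalid actions before any learned component has to try them.

```python
from itertools import permutations
from typing import Dict, List, Tuple

# Hypothetical world state: each stack is a list of block sizes, bottom first.
State = Dict[str, List[int]]
Move = Tuple[str, str]  # (source stack, destination stack)


def all_moves(state: State) -> List[Move]:
    """Every (source, destination) pair a purely data-driven learner might try."""
    return list(permutations(state.keys(), 2))


def is_valid(state: State, move: Move) -> bool:
    """Symbolic rules: the source must hold a block (only its top block moves),
    and a block may rest only on an empty spot or a strictly larger block (balance)."""
    src, dst = move
    if not state[src]:
        return False
    block = state[src][-1]
    return not state[dst] or state[dst][-1] > block


def candidate_moves(state: State) -> List[Move]:
    """Moves left for a learned policy to score after symbolic filtering."""
    return [m for m in all_moves(state) if is_valid(state, m)]


state: State = {"A": [3, 2, 1], "B": [], "C": []}
print(len(all_moves(state)), "raw moves")  # 6 raw moves
print(candidate_moves(state))              # [('A', 'B'), ('A', 'C')]
```

Here the symbolic layer cuts six raw moves down to two, so a learned policy would only score plausible actions instead of discovering the invalid ones through trial and error.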


Implications for the AI Industry

This research reinforces a theme gaining momentum throughout 2026: efficiency innovations may deliver more practical value than raw scaling. While hyperscalers race to secure gigawatts of compute capacity, this work demonstrates that architectural innovation can achieve comparable outcomes at a fraction of the energy cost.

The paper, set to be presented at ICRA 2026 in Vienna this June, arrives as the AI industry faces mounting pressure on energy consumption. Data centers consumed roughly 460 TWh globally in 2025, and projections suggest this could double by 2028 without efficiency gains.
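To see what that projection implies, the short Python calculation below converts "double between 2025 and 2028" into a compound annual growth rate. It uses only the two figures quoted above; treating the growth as a steady compound rate is an assumption, since the projection's own year-by-year curve is not given.

```python
baseline_twh_2025 = 460.0
years = 2028 - 2025
projected_twh_2028 = 2 * baseline_twh_2025   # "could double by 2028"

# Compound annual growth rate r such that baseline * (1 + r) ** years == projected
cagr = (projected_twh_2028 / baseline_twh_2025) ** (1 / years) - 1

print(f"implied annual growth: {cagr:.1%}")                    # 26.0%
print(f"projected 2028 demand: {projected_twh_2028:.0f} TWh")  # 920 TWh
```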

For robotics companies, the implications are immediate. A 100x reduction in training energy means faster iteration cycles, lower operational costs, and the ability to deploy AI-powered robots in energy-constrained environments — from remote warehouses to disaster response scenarios.

VLA Models in the Broader AI Landscape

The ICLR 2026 conference featured extensive research on VLA architectures, reflecting the field’s rapid maturation. A systematic review published on ScienceDirect catalogued the evolution of multimodal fusion approaches for robotic manipulation, highlighting how VLA models are merging language understanding, visual perception, and motor control into unified systems.

Several trends are emerging. First, efficient VLA architectures are becoming a dedicated research subfield, with surveys tracking model compression, distillation, and hybrid approaches. Second, industry adoption is accelerating — manufacturing, logistics, and healthcare are deploying VLA-based robots for tasks ranging from surgical assistance to warehouse picking. Third, the neuro-symbolic approach opens a path for embodied AI in resource-limited settings, including developing nations where energy infrastructure cannot support traditional AI workloads.

What This Means for Deployment at Scale

The practical implications extend beyond energy savings. A 34-minute training cycle versus 36+ hours fundamentally changes the economics of robotic AI. Organizations can afford to fine-tune models for specific environments, retrain on new tasks daily, and maintain fleets of specialized robots without requiring dedicated GPU clusters.
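To put the retraining claim in rough numbers, the Python sketch below counts how many back-to-back training runs fit into one day on a single machine and estimates the daily compute needed for a fleet of specialized models. It assumes one job at a time on identical hardware and ignores data collection and evaluation; the fleet size of 10 is a hypothetical value.

```python
MINUTES_PER_DAY = 24 * 60

conventional_run_min = 36 * 60   # "36+ hours", taken at its lower bound
neuro_symbolic_run_min = 34      # reported neuro-symbolic training time

# Sequential training runs that fit into 24 hours on one machine
runs_per_day_ns = MINUTES_PER_DAY // neuro_symbolic_run_min
runs_per_day_conv = MINUTES_PER_DAY / conventional_run_min

# Hypothetical fleet: retrain each specialized model once per day
specialized_models = 10
daily_hours_ns = specialized_models * neuro_symbolic_run_min / 60
daily_hours_conv = specialized_models * conventional_run_min / 60

print(runs_per_day_ns)                                                # 42
print(f"{runs_per_day_conv:.2f}")                                     # 0.67
print(f"{daily_hours_ns:.1f} h vs {daily_hours_conv:.0f} h per day")  # 5.7 h vs 360 h per day
```

Under these assumptions, daily retraining of ten models fits on one machine with the neuro-symbolic approach, whereas the conventional approach would need about 15 machines running around the clock (360 h / 24 h).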

This also shifts the competitive landscape. Startups with limited compute budgets may be able to compete with well-resourced incumbents on model quality. The democratization of efficient VLA training could accelerate robotics innovation globally, particularly in regions where energy costs and availability are primary constraints.

Frequently Asked Questions

What are Vision-Language-Action models and how do they differ from ChatGPT?

VLA models extend AI beyond text processing into the physical world. While ChatGPT responds to prompts with text, VLA models combine visual perception from cameras, natural language understanding, and motor control to enable robots to see, understand instructions, and physically act on them. They are the foundation of next-generation robotics.

How does the neuro-symbolic approach achieve 100x energy reduction?

Traditional VLA models learn entirely from data, which requires large training datasets and long GPU runs. The neuro-symbolic approach combines this learning with symbolic reasoning about physical principles such as shape, balance, and spatial relationships. This cuts out redundant trial and error, reducing training from 36+ hours to just 34 minutes while still reaching a 95% success rate on structured manipulation tasks.

When will energy-efficient VLA models be commercially available?

The research will be formally presented at ICRA 2026 in Vienna in June 2026. Commercial adoption typically lags academic breakthroughs by 12-24 months. Expect early commercial implementations in manufacturing and logistics robotics by mid-2027, with broader deployment following as frameworks mature and open-source implementations emerge.

Sources & Further Reading

- Neuro-Symbolic AI Cuts Robot Energy Use by 100x — Nerd Level Tech

- AI Breakthrough Cuts Energy Use by 100x While Boosting Accuracy — ScienceDaily

- State of Vision-Language-Action (VLA) Research at ICLR 2026 — Moritz Reuss

- Multimodal Fusion with VLA Models for Robotic Manipulation: A Systematic Review — ScienceDirect

- Efficient Vision-Language-Action Models for Embodied Manipulation: A Systematic Survey — arXiv