LLM inference

AI & Automation

TurboQuant: How Google’s KV Cache Algorithm Cuts LLM Inference Memory Costs

ALGERIATECH Editorial

May 25, 2026

⚡ Key Takeaways: Google’s TurboQuant compresses LLM KV cache to 3 bits, reducing memory 6× and boosting H100 attention speed...

Infrastructure & Cloud

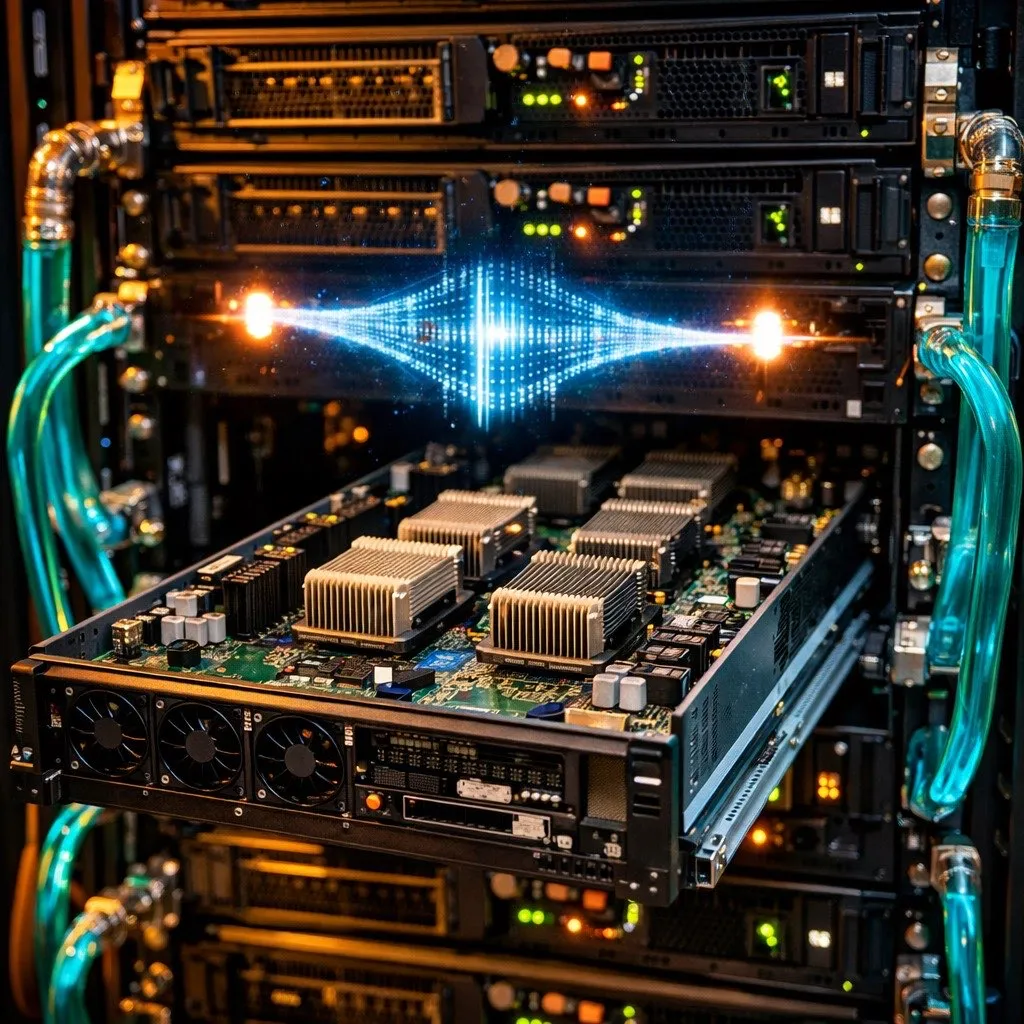

Private AI on Your Own Servers: Why Algerian Enterprises Should Run LLMs On-Premise

ALGERIATECH Editorial

May 9, 2026

⚡ Key Takeaways: On-premise LLM inference servers break even against cloud GPU API costs within 4-8 weeks of equivalent cloud...

AI & Automation

TurboQuant: Google’s 3-Bit KV Cache Compression Cuts LLM Memory 6x

ALGERIATECH Editorial

April 12, 2026

⚡ Key Takeaways: Google Research’s TurboQuant algorithm compresses the KV cache in LLMs to 3 bits per value, reducing memory...