AI inference

AI & Automation

AI Compute Scaling: Why the Shift from Training to Inference Changes Everything

ALGERIATECH Editorial

March 6, 2026

For three years, the AI industry was obsessed with a single metric: how much compute it takes to train the...

Infrastructure & Cloud

Beyond Nvidia: How Groq and Cerebras Are Redefining AI Inference Speed

ALGERIATECH Editorial

February 10, 2026

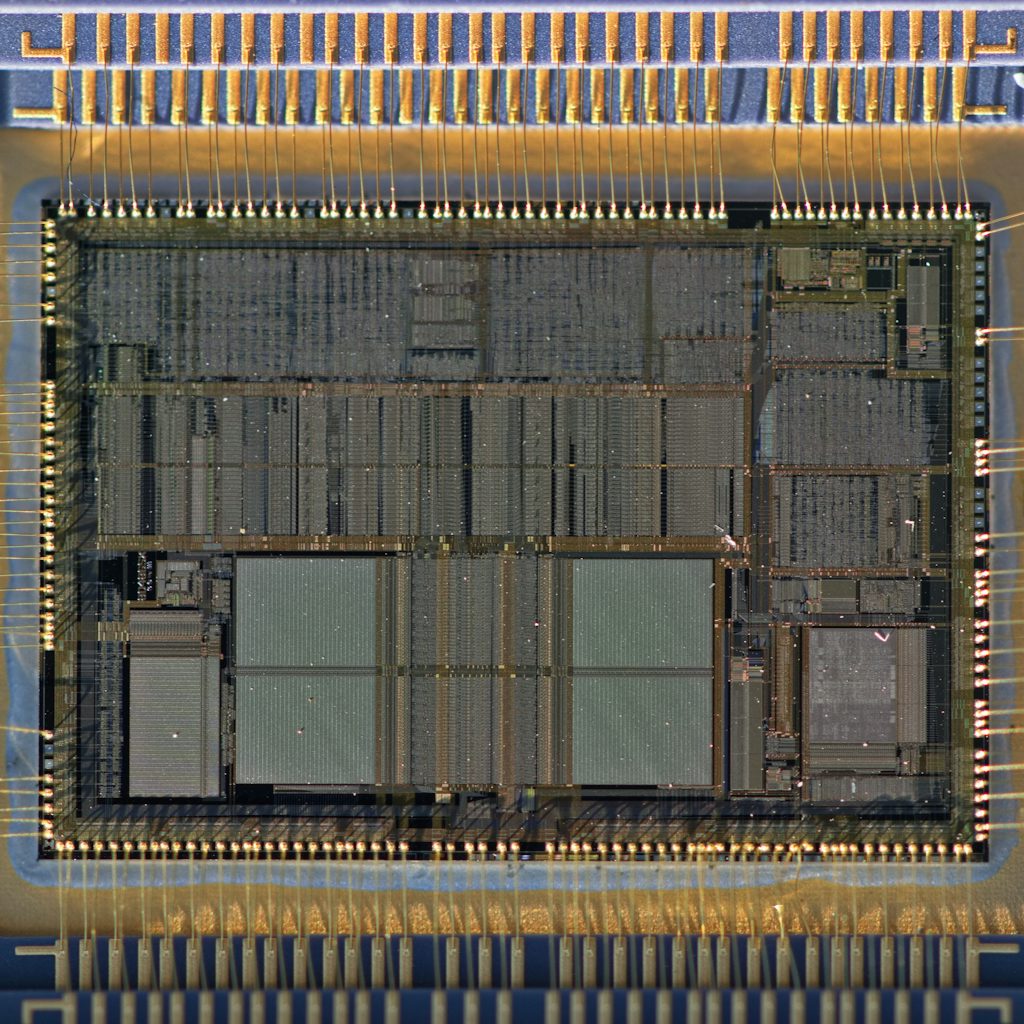

When most organizations think about AI infrastructure, they think about Nvidia. The H100 GPU has become the default unit of AI compute: a roughly $30,000 chip that powers everything from model training at OpenAI to inference pipelines at enterprise software companies.