Most teams using AI tools are stuck in the one-shot prompt era. Someone writes a prompt, gets a decent result, and moves on. Next week, they write a slightly different prompt for the same task and get a completely different result. There is no consistency, no quality tracking, and no accumulation of knowledge.

The numbers confirm the gap. According to McKinsey’s 2025 State of AI report, 88 percent of organizations now use AI in at least one business function, yet only about 6 percent — the so-called “AI high performers” — attribute more than 5 percent of their earnings to AI. The majority are experimenting without scaling. One of the biggest reasons is that most AI usage remains ad-hoc: individual prompts fired at individual problems, with no system to capture what works and discard what does not.

This is how most businesses used spreadsheets in 1995 — individual files, no templates, no formulas, no shared knowledge. It works until it doesn’t, and it doesn’t work at scale.

The shift happening now is from ad-hoc prompting to what the industry increasingly calls context engineering — building structured, tested, versioned AI skill systems that produce consistent results and improve over time. LangChain’s 2025 State of Agent Engineering survey of over 1,300 practitioners found that 32 percent cite output quality as their top production barrier, with most failures traced not to model capabilities but to poor context management. The fix is not better models. It is better systems around those models.

What a Reusable AI Skill Actually Is

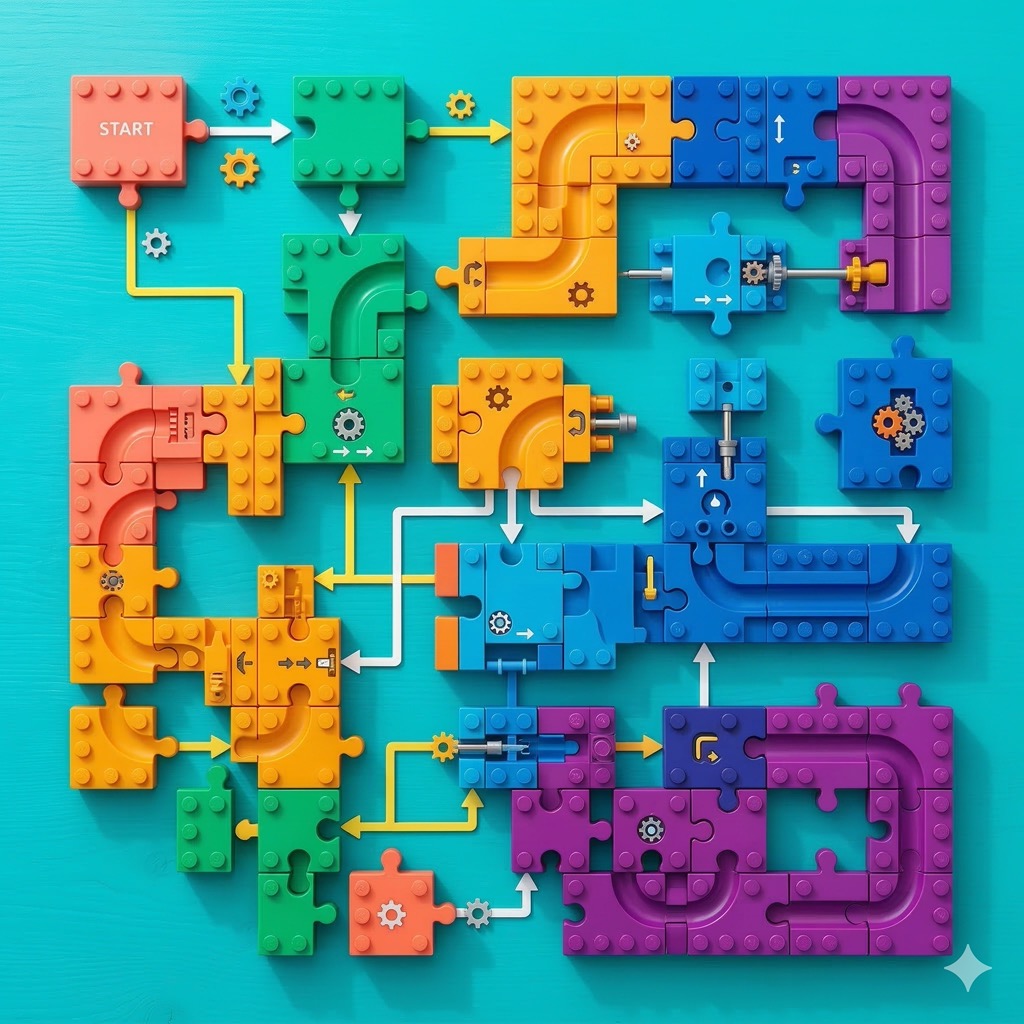

A skill is more than a prompt. It is a package of components that work together to produce reliable output. Think of it as the difference between typing a query into a search bar and building a documented API endpoint. Both get results; only one is production-grade.

The Instruction Set

The core prompt — detailed instructions that tell the AI what to produce, how to structure it, what to include, and what to avoid. Unlike a one-shot prompt, skill instructions are refined through multiple iterations and encode lessons learned from dozens of outputs.

A one-shot prompt might say: “Write a LinkedIn post about automation.”

A skill instruction set says: “Write a LinkedIn post following brand structure rules. First line must be a standalone hook sentence, not part of a paragraph. Include at least one specific number or statistic. Final line must not be a question. Keep total word count under 300. Reference persuasion techniques from the reference file. Use short paragraphs with visual line breaks.”

The difference is specificity, and specificity drives consistency.

Reference Files

Contextual documents that the skill accesses every time it runs. A marketing skill might include a brand tone of voice guide, a persuasion techniques toolkit, and examples of successful posts. A code review skill might include architecture guidelines, naming conventions, and example implementations.

This matters more than most teams realize. Research on institutional knowledge loss shows that 42 percent of organizational knowledge resides solely with individual employees. When those employees leave, the knowledge vanishes. Reference files serve double duty: they make the AI’s output more aligned with organizational expectations, and they document institutional knowledge that would otherwise walk out the door with the next resignation.

The Trigger and Activation Layer

A structured description that tells the AI system when to activate the skill. Modern AI development tools — from Claude Code’s skill system to LangChain-based agent frameworks — read these descriptions to determine relevance. When a user’s request matches the skill’s purpose, the skill activates automatically.

Getting the trigger description right is its own optimization problem. Too broad and the skill fires on irrelevant requests. Too narrow and it misses legitimate use cases. The best triggers describe the outcome, not the method: “Generate a technical blog post following our editorial standards” rather than “Write text about technology.”

The Test Suite

A set of test prompts and assertions that measure output quality. This is what elevates a skill from “a prompt that usually works” to “a tested tool with known reliability.” Tools like Promptfoo (an open-source CLI for evaluating LLM outputs with CI/CD integration) and platforms like Braintrust and LangSmith now make it possible to run structured evaluations against prompt systems — the same way software teams run unit tests against code.

The test suite answers the question: “Is this skill producing quality output right now?” Without one, degradation is invisible until a customer complains.
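
As a minimal sketch, the LinkedIn post rules from the instruction set example can be written as plain Python checks. The function names and the sample post are invented for illustration, and the checks are deliberately crude (the "statistic" check only looks for a digit). An evaluation tool such as Promptfoo would express similar checks in its own configuration format rather than in code like this.

```python
import re

def first_line_is_standalone_hook(post: str) -> bool:
    """The first line must be non-empty and followed by a blank line."""
    lines = post.strip().split("\n")
    return len(lines) > 1 and lines[0].strip() != "" and lines[1].strip() == ""

def contains_number(post: str) -> bool:
    """Crude proxy for 'includes a specific number or statistic'."""
    return re.search(r"\d", post) is not None

def final_line_is_not_question(post: str) -> bool:
    return not post.strip().endswith("?")

def under_word_limit(post: str, limit: int = 300) -> bool:
    return len(post.split()) < limit

ASSERTIONS = [
    first_line_is_standalone_hook,
    contains_number,
    final_line_is_not_question,
    under_word_limit,
]

def run_assertions(post: str) -> dict:
    """Map each assertion name to True (pass) or False (fail)."""
    return {check.__name__: check(post) for check in ASSERTIONS}

# Hypothetical output from the skill, used only to exercise the checks.
SAMPLE_POST = """Most automation projects fail before the first workflow ships.

We audited 40 internal processes last quarter.

Only 12 were worth automating.

Start with the boring ones."""

results = run_assertions(SAMPLE_POST)
print(f"{sum(results.values())}/{len(results)} assertions passed")
```

Each check returns a plain boolean, so the pass count can be logged per run and compared across skill versions.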

The Maturity Model: Five Stages of AI Skill Development

Skills evolve through predictable stages. Most organizations are at Stage 1 or 2. The competitive advantage belongs to those who reach Stage 3 and beyond.

Stage 1: The Draft (Version 0.1)

A basic prompt that produces roughly correct output. No reference files, no testing, no trigger optimization. This is where most teams stop — and where most of that 88 percent of AI-adopting organizations currently sit.

Quality: Inconsistent. Works sometimes, fails unpredictably.

Maintenance: None. Nobody knows if quality degrades.

Stage 2: The Refined Prompt (Version 1.0)

The prompt has been manually improved through several iterations. Common failure modes have been addressed with additional instructions. Some reference files have been added for context.

Quality: Better but still variable. Major failure modes are handled; edge cases are not.

Maintenance: Ad-hoc. Someone occasionally notices a problem and fixes it.

Stage 3: The Tested Skill (Version 2.0)

Assertions and a test suite have been defined. Quality is measurable — a score like 21/25 or 24/25 that can be tracked over time. Regressions are detectable. This is the stage where prompt management platforms like PromptLayer and evaluation frameworks like DeepEval start delivering real value, providing version control, automated regression testing, and performance analytics.
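
One way to picture score tracking is a short history of suite runs with a check for drops between consecutive versions. This is a sketch under stated assumptions: the `SuiteRun` record, the version labels, and the scores are all hypothetical, and platforms such as PromptLayer keep this history in their own formats.

```python
from __future__ import annotations

from dataclasses import dataclass

@dataclass
class SuiteRun:
    version: str
    passed: int
    total: int

    @property
    def label(self) -> str:
        """Human-readable score such as '21/25'."""
        return f"{self.passed}/{self.total}"

def find_regressions(history: list[SuiteRun]) -> list[tuple[str, str]]:
    """Return (previous, current) version pairs where the pass rate dropped."""
    regressions = []
    for prev, curr in zip(history, history[1:]):
        if curr.passed / curr.total < prev.passed / prev.total:
            regressions.append((prev.version, curr.version))
    return regressions

# Hypothetical run history for one skill.
history = [
    SuiteRun("2.0", 21, 25),
    SuiteRun("2.1", 24, 25),
    SuiteRun("2.2", 22, 25),
]

for run in history:
    print(run.version, run.label)
print("regressions:", find_regressions(history))
```

Comparing pass rates rather than raw counts keeps the check valid when assertions are added to the suite between versions.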

Quality: Measurably good. Known weaknesses are documented.

Maintenance: Score-driven. When the score drops, attention is needed.

Stage 4: The Self-Improving Skill (Version 3.0)

Autonomous feedback loops run evaluations against the test suite and refine the skill instructions. LLM-as-judge evaluation — where one AI model evaluates another’s output — has moved from experimental to standard practice, making it possible to run thousands of evaluations overnight without human reviewers.
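
The loop described here can be sketched as a greedy search: propose a revision, score it, and keep it only if the score improves. Everything below is an assumption made for illustration. `stub_propose` and `stub_evaluate` stand in for real model calls (a revising model and an LLM judge), and no actual API is used.

```python
from __future__ import annotations

from typing import Callable

def improve_skill(
    instructions: str,
    propose: Callable[[str], str],
    evaluate: Callable[[str], float],
    rounds: int = 5,
) -> tuple[str, float]:
    """Greedy loop: keep a proposed revision only if it scores strictly higher."""
    best, best_score = instructions, evaluate(instructions)
    for _ in range(rounds):
        candidate = propose(best)
        score = evaluate(candidate)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score

# Hypothetical rules the judge rewards; a real judge would score actual outputs.
RULES = [
    "Include one statistic.",
    "Do not end with a question.",
    "Stay under 300 words.",
]

def stub_propose(current: str) -> str:
    """Append the first missing rule (a real system would ask a model to revise)."""
    for rule in RULES:
        if rule not in current:
            return current + " " + rule
    return current

def stub_evaluate(instructions: str) -> float:
    """Pretend judge: fraction of rules that the instructions mention."""
    return sum(rule in instructions for rule in RULES) / len(RULES)

final, score = improve_skill("Write a LinkedIn post.", stub_propose, stub_evaluate)
print(final)
print(score)
```

Requiring a strictly higher score means a revision that merely ties is discarded, which keeps the instructions from drifting without measurable benefit.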

Quality: High and consistently improving. Approaching ceiling on measurable dimensions.

Maintenance: Minimal for structural quality. Human review focused on creative and contextual dimensions.

Stage 5: The Business Asset (Version 4.0+)

The skill has comprehensive test coverage, documented performance history, version control, and known reliability metrics. It can be shared across teams, compared against alternatives, and treated as organizational intellectual property.

Quality: Production-grade. Reliable enough to trust with customer-facing output.

Maintenance: Systematic. Regular test suite reviews, assertion updates, and autonomous optimization cycles.

Building Skills That Last: Practical Architecture

Start with the Output, Not the Prompt

Before writing a single instruction, define what the output should look like. Collect 5-10 examples of ideal output. Identify the specific characteristics that make them good — structure, length, elements, formatting, tone.

These characteristics become your assertions, which become your test suite, which becomes the measurement system for improvement. This is the same principle behind test-driven development in software engineering, applied to AI outputs.

Encode Organizational Knowledge in Reference Files

Every organization has implicit knowledge that affects output quality — brand guidelines, style preferences, industry terminology, competitive positioning. This knowledge lives in people’s heads until someone encodes it in reference files.

The practical implementation is straightforward. Claude Code’s skill system, for example, stores skills as folders containing a SKILL.md instruction file alongside reference documents, templates, and helper scripts. The skill can access all files in its folder every time it runs. Similar patterns exist in LangChain-based frameworks and enterprise prompt management platforms.
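
To make the folder pattern concrete, here is a small sketch that reads a skill folder into memory. It illustrates the layout only and is not how Claude Code itself loads skills. The `load_skill` helper, the folder name, and the `tone-of-voice.md` file are hypothetical; only the `SKILL.md` file name comes from the description above.

```python
from pathlib import Path
import tempfile

def load_skill(folder: Path) -> dict:
    """Read SKILL.md as the instructions and every other .md file as a reference."""
    instructions = (folder / "SKILL.md").read_text(encoding="utf-8")
    references = {
        path.name: path.read_text(encoding="utf-8")
        for path in sorted(folder.glob("*.md"))
        if path.name != "SKILL.md"
    }
    return {"instructions": instructions, "references": references}

# Demo against a throwaway folder so the example is self-contained.
with tempfile.TemporaryDirectory() as tmp:
    skill_dir = Path(tmp) / "linkedin-post"
    skill_dir.mkdir()
    (skill_dir / "SKILL.md").write_text(
        "Write a LinkedIn post following brand rules.", encoding="utf-8"
    )
    (skill_dir / "tone-of-voice.md").write_text(
        "Plain, direct, no jargon.", encoding="utf-8"
    )
    skill = load_skill(skill_dir)

print(skill["instructions"])
print(sorted(skill["references"]))
```

Keeping references as separate files means a brand guide can be updated and versioned without touching the instruction file.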

The key insight is that reference files are not just about AI quality — they are a knowledge management system. When a senior team member’s expertise is encoded in skill reference files, that expertise persists even when the person moves on.

Version Everything

Treat skill files like code. Use version control. Never overwrite a working skill — branch it, modify the branch, test the branch, and merge only when the new version scores equal to or higher than the previous version.

Prompt versioning platforms track every change and link it to performance metrics. If version 2.3 of a skill scores worse than 2.2 on your test suite, you roll back. This version control discipline prevents the common failure mode where someone “improves” a skill and inadvertently breaks it for half its use cases.
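
The merge rule can be sketched as a small gate. The total-score comparison follows the rule stated above. The per-use-case report is an extra assumption added here, to surface the case where an "improvement" breaks some use cases. The use-case names and scores are invented.

```python
from __future__ import annotations

def should_merge(previous: dict[str, int], candidate: dict[str, int]) -> bool:
    """Merge only if the candidate's total score is equal to or higher."""
    return sum(candidate.values()) >= sum(previous.values())

def dropped_cases(previous: dict[str, int], candidate: dict[str, int]) -> list[str]:
    """List the use cases where the candidate scores lower than before."""
    return sorted(
        name for name, score in previous.items() if candidate.get(name, 0) < score
    )

# Hypothetical per-use-case scores for two versions of the same skill.
v2_2 = {"product launch": 8, "hiring": 8, "thought leadership": 8}
v2_3 = {"product launch": 9, "hiring": 9, "thought leadership": 5}

if should_merge(v2_2, v2_3):
    print("merge 2.3")
else:
    print("keep 2.2; regressed on:", dropped_cases(v2_2, v2_3))
```

In this invented data, version 2.3 improves two use cases but loses more on the third, so the gate keeps 2.2 and names the use case that regressed.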

Design for the 80/20 Rule

A skill does not need to handle every possible input perfectly. Design for the 80 percent of use cases that represent your actual workflow. Document the edge cases that require manual handling rather than trying to encode increasingly complex logic to cover rare scenarios.

The most effective skills are narrow and deep, not broad and shallow. A skill that writes excellent product descriptions for your specific e-commerce category will outperform one that tries to write any type of marketing copy.

The Organizational Impact

From Individual Knowledge to Team Capability

When skills are documented, tested, and shared, an individual’s expertise becomes a team capability. A senior marketer’s copywriting instincts, encoded in a skill’s instructions and reference files, become available to every team member.

This is particularly valuable for onboarding. Instead of spending months learning organizational style and preferences, new team members access skills that encode those preferences directly. A widely cited IDC estimate puts the cost of poor knowledge sharing at $31.5 billion a year for Fortune 500 companies alone, so the return on investing in skill documentation is substantial.

From Inconsistent Quality to Predictable Output

One-shot prompts produce wildly variable output. Tested skills produce predictable output within a known quality range. For businesses that depend on consistent quality — agencies, content teams, customer communication — this predictability is transformative.

McKinsey’s research found that workflow redesign has the single biggest effect on an organization’s ability to capture EBIT impact from generative AI. Skills are the building blocks of that workflow redesign: standardized, testable units of AI capability that can be composed into larger automated workflows.

From Manual Scaling to Automated Scaling

Manual prompt workflows scale linearly: more output requires more human time. Skill-based workflows scale sublinearly: once a skill is built and tested, it handles increased volume without a proportional increase in human effort. The initial investment in building and testing the skill pays dividends as volume increases.

The timing matters. Gartner predicts that 40 percent of enterprise applications will feature task-specific AI agents by 2026, up from less than 5 percent in 2025. Those agents need reliable, tested skill components to function, not ad-hoc prompts that break under production load.

Common Mistakes to Avoid

Over-Engineering the First Version

The first version of a skill should be simple. Get the basic flow right, define initial assertions, and start iterating. Teams that spend weeks perfecting version 1.0 often discover they were optimizing the wrong things. Ship the draft, measure it, then improve what the data says matters.

Ignoring the Trigger Problem

A skill that produces perfect output is useless if it does not activate when needed. In agent-based systems, trigger reliability is half the battle. Test activation as seriously as output quality: a skill with 95 percent output quality but 60 percent trigger accuracy delivers a good result on only about 57 percent of requests (0.95 × 0.60), assuming the two failure modes are independent.
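
The 57 percent figure is the product of the two rates. The short sketch below shows the arithmetic, assuming trigger failures and output failures are independent. The function name is hypothetical.

```python
def end_to_end_reliability(trigger_accuracy: float, output_quality: float) -> float:
    """Probability that the skill both activates and produces acceptable output,
    assuming the two events are independent."""
    return trigger_accuracy * output_quality

baseline = end_to_end_reliability(trigger_accuracy=0.60, output_quality=0.95)
print(f"{baseline:.0%}")  # prints "57%"
```

Because the rates multiply, the weaker of the two caps the whole system: with 60 percent trigger accuracy, even perfect output quality would leave the skill at 60 percent end to end.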

Not Testing Against Diverse Inputs

A skill tested against one prompt type will fail on others. Use diverse test prompts that cover the range of real-world inputs the skill will encounter. Promptfoo and similar evaluation tools support parameterized test suites that run the same skill against dozens of input variations automatically.
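
A parameterized suite can be sketched as a matrix of input variations run through the same skill and the same check. The topics, audiences, prompt template, and `stub_skill` function below are placeholders. A real suite would call the model and use a fuller set of assertions, and tools like Promptfoo define such matrices in their own configuration rather than in Python.

```python
from itertools import product

# Hypothetical input dimensions; real suites should mirror actual requests.
TOPICS = ["automation", "hiring", "pricing"]
AUDIENCES = ["founders", "engineers"]
TEMPLATE = "Write a LinkedIn post about {topic} for {audience}."

def stub_skill(prompt: str) -> str:
    """Placeholder for the real model call; returns a canned post."""
    return f"One number changes the story.\n\n3 lessons from: {prompt}\n\nStart small."

def check(post: str) -> bool:
    """Pass if the post contains a digit and does not end with a question."""
    return any(ch.isdigit() for ch in post) and not post.strip().endswith("?")

def run_matrix() -> dict:
    """Run every topic/audience combination and record pass or fail."""
    results = {}
    for topic, audience in product(TOPICS, AUDIENCES):
        prompt = TEMPLATE.format(topic=topic, audience=audience)
        results[(topic, audience)] = check(stub_skill(prompt))
    return results

results = run_matrix()
print(f"{sum(results.values())}/{len(results)} variations passed")
```

Recording results per combination shows which kind of input fails, which an overall pass count would hide.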

Treating Skills as Static

Skills need maintenance. Source data changes, organizational preferences evolve, and AI model updates can shift output characteristics. The LangChain survey found that 89 percent of organizations with agents in production have implemented observability — the ability to monitor how skills perform in real time and catch degradation before it reaches customers.

Conclusion

The gap between organizations using one-shot prompts and those building reusable, tested AI skills is widening rapidly. Teams at Stage 1 are doing the same work repeatedly, inconsistently, and without accumulating improvement. Teams at Stage 3 and above are building compounding advantages — skills that improve over time, encode organizational knowledge, and produce reliable output at scale.

The path forward is not technically difficult. It requires discipline: define output requirements as testable assertions, build evaluation suites, use reference files to encode organizational knowledge, version control everything, and iterate systematically. The tools exist today — from open-source frameworks like Promptfoo and DeepEval to commercial platforms like PromptLayer and LangSmith, to native skill systems in tools like Claude Code. The advantage goes to teams that adopt them first.

Frequently Asked Questions

How long does it take to build a reusable AI skill from scratch?

A basic skill (Stage 2) can be built in a single afternoon — write the instruction set, add 2-3 reference files, and test against 5-10 sample inputs. Reaching Stage 3 with a formal test suite typically takes one to two additional sessions of defining assertions and running evaluations. The investment is modest compared to the time saved: a well-built skill eliminates hours of repeated prompt crafting and output correction across every future use.

Do I need expensive tools or platforms to start building AI skills?

No. You can start with free, open-source tools. Promptfoo is a free CLI for evaluating LLM outputs with CI/CD integration. Claude Code includes a native skill system at no additional cost beyond the base subscription. DeepEval provides open-source LLM evaluation similar to unit testing frameworks. Version control through Git is free. Enterprise platforms like PromptLayer and LangSmith add convenience and collaboration features, but the core workflow — write, test, version, iterate — requires no paid tooling.

What is the difference between prompt engineering and building reusable AI skills?

Prompt engineering focuses on crafting a single effective prompt for a specific interaction. Building reusable AI skills is a systems engineering practice: it involves instruction sets, reference files, trigger configurations, test suites, version control, and continuous improvement loops. The industry is increasingly calling this broader discipline “context engineering” — designing the full context that surrounds and informs the AI model, not just the prompt text. A prompt is a sentence; a skill is a tested, maintained software component.

Sources & Further Reading

- The State of AI in 2025: Agents, Innovation, and Transformation — McKinsey

- State of Agent Engineering 2025 — LangChain

- 40% of Enterprise Apps Will Feature AI Agents by 2026 — Gartner

- Agentic AI Strategy: Tech Trends 2026 — Deloitte

- The Rise of Context Engineering — LangChain Blog

- Extend Claude with Skills — Claude Code Documentation

- The 5 Best Prompt Versioning Tools in 2025 — Braintrust

- Promptfoo: Test Your Prompts, Agents, and RAGs — GitHub