Table of Contents

- Introduction: Software That Acts

- Layer 1: Foundation Models — The Reasoning Engine

- Layer 2: Orchestration Frameworks — The Nervous System

- Layer 3: Tools and Protocols — The Hands

- Layer 4: Memory and Context — The Brain’s Filing Cabinet

- Layer 5: Evaluation and Guardrails — Quality Control

- Layer 6: Deployment and Operations — Running in Production

- Putting It All Together: A Real-World Agent Architecture

- The Stack Is Still Forming

- Decision Radar

- Sources & Further Reading

Introduction: Software That Acts {#introduction}

Every major computing paradigm has its canonical technology stack. The web had LAMP (Linux, Apache, MySQL, PHP). Mobile had native SDKs and app stores. Cloud-native had Kubernetes, Docker, and microservices.

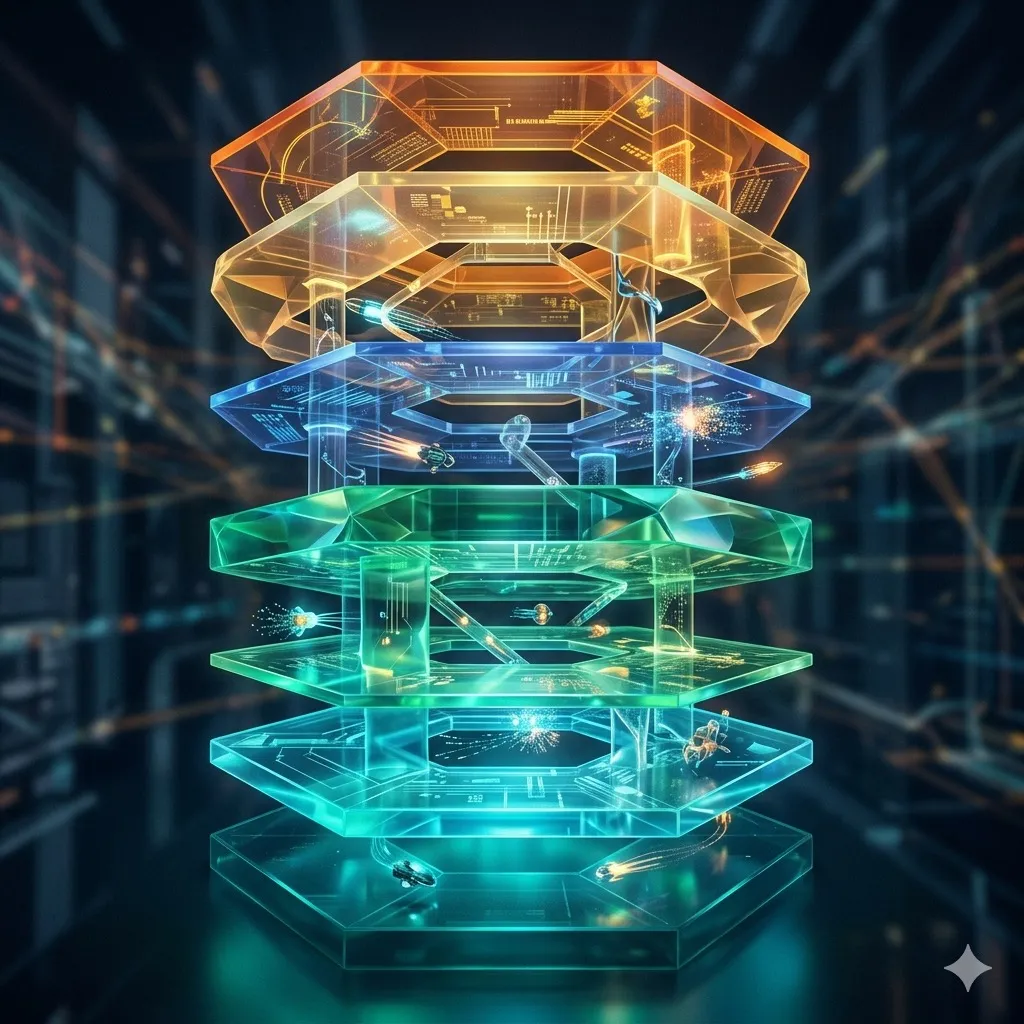

The AI agent era is assembling its own stack — a set of layered technologies that, together, enable software to reason, plan, and act autonomously. Understanding this stack is essential for anyone building, evaluating, or investing in AI-powered systems.

This guide breaks down the agentic AI stack layer by layer, from the foundation models at the bottom to the production operations tooling at the top. Each layer represents a distinct set of technical challenges and a distinct set of vendors competing to dominate it. Together, they form the infrastructure powering the AI revolution.

Layer 1: Foundation Models — The Reasoning Engine {#layer-1}

At the base of every AI agent sits a foundation model. This is the component that understands language, reasons about problems, and generates responses. In 2026, the landscape includes:

Frontier closed-source models: OpenAI’s GPT-4o and o3, Anthropic’s Claude Opus and Sonnet, and Google’s Gemini 2.5 Pro represent the cutting edge. These models excel at complex reasoning, long-context understanding, and tool use — the three capabilities most critical for agentic applications.

Open-source alternatives: Meta’s Llama 4 family uses a Mixture-of-Experts architecture — Maverick activates 17 billion parameters per token from a total of 400 billion, while the Behemoth preview model approaches 2 trillion total parameters. Mistral Large and DeepSeek-V3 (671 billion total parameters, 37 billion active) offer competitive performance for many use cases. The open-source advantage is not just cost — it is control. Organizations can fine-tune, deploy on-premises, and inspect model weights.

Specialized models: Domain-specific models trained on medical, legal, financial, or scientific data can outperform general-purpose models within their domains, often at a fraction of the size and cost.

Choosing the Right Model

The most common mistake in agent architecture is over-specifying the model. Not every agent task requires a frontier model. A well-architected system routes simple tasks (classification, extraction, formatting) to smaller, cheaper models and reserves frontier models for genuine reasoning challenges.

This “model routing” pattern has become standard practice. Companies like Martian — which uses mechanistic interpretability to map model capabilities — and Not Diamond — which returns routing recommendations in under 50 milliseconds — offer automated model selection. Not Diamond reports accuracy improvements of up to 25% over any single model by routing each query to the best-suited provider. Most production systems, however, use simpler heuristic routing based on task type and complexity.
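The heuristic routing described above can be sketched in a few lines. This is a minimal illustration, not any vendor's method: the tier names `small-model` and `frontier-model`, the `Task` type, and the word-count threshold are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical tier names; substitute real model identifiers in practice.
SMALL_MODEL = "small-model"
FRONTIER_MODEL = "frontier-model"

# Task types the text lists as simple enough for a cheaper model.
SIMPLE_TASKS = {"classification", "extraction", "formatting"}


@dataclass
class Task:
    kind: str    # e.g. "classification" or "planning"
    prompt: str


def route(task: Task, max_simple_words: int = 200) -> str:
    """Pick a model tier from the task type and a crude complexity proxy."""
    is_simple_kind = task.kind in SIMPLE_TASKS
    is_short = len(task.prompt.split()) <= max_simple_words
    # Only short tasks of a known-simple type go to the cheaper model.
    return SMALL_MODEL if is_simple_kind and is_short else FRONTIER_MODEL
```

For example, `route(Task("classification", "Is this spam?"))` selects the small tier, while a planning task or a very long extraction prompt falls through to the frontier tier.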

Layer 2: Orchestration Frameworks — The Nervous System {#layer-2}

A foundation model alone is a brain in a jar. Orchestration frameworks give it a body — connecting the model to tools, managing conversation flow, handling errors, and coordinating multi-step tasks.

The Framework Landscape

LangChain / LangGraph remains the most widely adopted framework, with over 128,000 GitHub stars as of early 2026. LangGraph, its graph-based orchestration layer, models agent tasks as directed graphs where nodes represent agents or decision points and edges control data flow — supporting conditional branching, parallel execution, and persistent state. Companies including Klarna, Replit, and Elastic use LangGraph for production agent systems.
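To make the graph idea concrete, here is a small pure-Python sketch of nodes, conditional edges, and shared state. It deliberately does not use LangGraph's API; the `Graph` class and the node names are hypothetical and exist only to illustrate the concept.

```python
from typing import Callable, Dict, Optional

State = Dict[str, object]
Node = Callable[[State], State]
# A router inspects the state and returns the next node name, or None to stop.
Router = Callable[[State], Optional[str]]


class Graph:
    def __init__(self) -> None:
        self.nodes: Dict[str, Node] = {}
        self.routers: Dict[str, Router] = {}

    def add_node(self, name: str, fn: Node, router: Router) -> None:
        self.nodes[name] = fn
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 20) -> State:
        current = start
        for _ in range(max_steps):  # guard against accidental cycles
            state = self.nodes[current](state)
            nxt = self.routers[current](state)
            if nxt is None:
                return state
            current = nxt
        raise RuntimeError("step limit reached")


def classify(state: State) -> State:
    text = str(state["query"]).lower()
    return {**state, "topic": "billing" if "charge" in text else "other"}


def billing(state: State) -> State:
    return {**state, "answer": "looking up recent charges"}


def fallback(state: State) -> State:
    return {**state, "answer": "escalating to a human"}


graph = Graph()
# Conditional edge: the router chooses the branch from the classified topic.
graph.add_node("classify", classify,
               lambda s: "billing" if s["topic"] == "billing" else "fallback")
graph.add_node("billing", billing, lambda s: None)
graph.add_node("fallback", fallback, lambda s: None)
```

Calling `graph.run("classify", {"query": "Why was I charged twice?"})` follows the billing branch; any other query takes the fallback branch. A real framework adds persistence and parallel branches on top of this basic shape.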

CrewAI popularized the “crew” metaphor — teams of specialized agents with defined roles collaborating on complex tasks. It operates on a dual architecture: Crews handle autonomous multi-agent collaboration, while Flows provide event-driven workflow orchestration for enterprise environments. CrewAI now supports Google’s A2A protocol for agent interoperability and has grown a certified developer community of over 100,000 developers.

Microsoft Agent Framework is the successor to AutoGen and Semantic Kernel, unifying both into a single production-grade platform. The framework reached Release Candidate status in early 2026, targeting a 1.0 GA release by the end of Q1 2026 with stable, versioned APIs and enterprise readiness certification. AutoGen itself remains maintained for critical bug fixes but is no longer receiving significant new features.

Anthropic’s Claude Agent SDK (renamed from the Claude Code SDK) provides thin abstractions over Claude’s native tool use and extended thinking capabilities. The SDK powers Claude Code itself and is available in both Python and TypeScript. Its philosophy: the model should do the orchestration, not the framework.

The Orchestration Paradox

More sophisticated orchestration does not always produce better results. Each additional agent adds coordination overhead and another point where errors can propagate, so the best agent architectures use the simplest orchestration that achieves the goal.

For most real-world tasks, a single agent with good tools and clear instructions is a better starting point than a multi-agent system. Multi-agent architectures should be reserved for genuinely parallel workloads, such as searching multiple data sources simultaneously or processing documents in different languages.
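A genuinely parallel workload of the kind mentioned above might be sketched as a simple fan-out with `asyncio`. The `search_source` worker is a stand-in (an assumption for this example); in a real system it would call a model or an external API.

```python
import asyncio
from typing import Dict, List


async def search_source(source: str, query: str) -> str:
    """Stand-in for one worker agent; a real one would call a model or API."""
    await asyncio.sleep(0.01)  # simulate I/O latency
    return f"{source}: results for {query!r}"


async def fan_out(query: str, sources: List[str]) -> Dict[str, str]:
    """Run one worker per source concurrently and collect the results."""
    results = await asyncio.gather(*(search_source(s, query) for s in sources))
    # gather preserves argument order, so results line up with sources.
    return dict(zip(sources, results))
```

Running `asyncio.run(fan_out("refund policy", ["wiki", "tickets", "docs"]))` returns one result per source. The workers never need to talk to each other, which is what keeps coordination overhead low in this pattern.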

Layer 3: Tools and Protocols — The Hands {#layer-3}

Agents are only as useful as the tools they can access. In 2026, two approaches dominate how agents interact with external systems.

The Model Context Protocol (MCP)

MCP has emerged as the de facto standard for agent-tool communication. Introduced by Anthropic in late 2024, MCP defines a universal interface that any tool provider can implement, allowing any MCP-compatible agent to discover and use the tool automatically.

Think of MCP as USB-C for AI. Before USB, every peripheral needed its own connector and driver. Before MCP, every agent-tool integration required custom code. MCP standardizes tool discovery (what tools are available?), tool description (what does this tool do and what parameters does it need?), and tool invocation (how do I call it and get results back?).
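The three steps map onto JSON-RPC 2.0 messages. The sketch below builds the request payloads for discovery (`tools/list`) and invocation (`tools/call`), and shows the general shape of a tool description. The `lookup_order` tool is hypothetical, and the MCP specification remains the authority on exact field names and transport details.

```python
import json
from itertools import count
from typing import Any, Dict

_ids = count(1)  # monotonically increasing JSON-RPC request ids


def list_tools_request() -> Dict[str, Any]:
    """Discovery: ask the server which tools it exposes."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": "tools/list"}


def call_tool_request(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Invocation: call one tool by name with JSON arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Description: the shape of one entry a server might return from tools/list.
# The tool itself ("lookup_order") is hypothetical.
EXAMPLE_TOOL = {
    "name": "lookup_order",
    "description": "Fetch recent transactions for an order.",
    "inputSchema": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}

if __name__ == "__main__":
    print(json.dumps(call_tool_request("lookup_order", {"order_id": "A1"}), indent=2))
```

Because the description carries a JSON Schema for its inputs, an agent can validate arguments before sending the call rather than discovering mistakes from an error response.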

As of March 2026, over 1,800 MCP server implementations have been cataloged, covering databases, APIs, file systems, cloud services, and enterprise applications. Major platforms including Cursor, Claude Code, Replit, Sourcegraph, Visual Studio Code, and ChatGPT have adopted MCP as their primary agent-tool interface.

Computer Use: The GUI Fallback

Not everything has an API. For legacy systems, internal tools, and web applications that lack programmatic interfaces, computer use — AI agents that interact with graphical user interfaces — provides a powerful fallback.

Computer use agents operate by taking screenshots, identifying interface elements, and simulating mouse clicks and keyboard inputs. Anthropic’s Claude computer use, OpenAI’s CUA (Computer-Using Agent, now integrated into ChatGPT as “agent mode”), and Google’s Project Mariner — which scored 83.5% on the WebVoyager benchmark and can run 10 tasks in parallel — represent different approaches to this challenge.

The technology works well for structured workflows (fill out this form, navigate to this page, click this button) but remains brittle for exploratory tasks that require visual judgment. In practice, computer use is best deployed as a bridge — a way to automate legacy processes while APIs are being built.

Layer 4: Memory and Context — The Brain’s Filing Cabinet {#layer-4}

An agent that forgets everything between conversations is limited to simple, one-shot tasks. Persistent memory — the ability to maintain and retrieve information across sessions — is what transforms a chatbot into a capable assistant.

Types of Agent Memory

Short-term memory (conversation context): The current conversation history. Limited by the model’s context window (up to 1-2 million tokens for frontier models in 2026, though practical limits are lower).

Working memory (scratchpad): Temporary notes the agent creates during a complex task — intermediate results, partial plans, hypotheses being tested. This is typically maintained in the prompt or a lightweight key-value store.

Long-term memory (persistent knowledge): Facts, preferences, and past interactions stored in a vector database or structured knowledge base. The agent retrieves relevant memories at the start of each conversation.

Episodic memory (experience): Records of past task executions — what worked, what failed, and why. This enables agents to learn from experience and avoid repeating mistakes.
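One way to picture the four memory types is as fields on a single container. This is a simplified sketch: the class and method names are hypothetical, and the keyword lookup stands in for the vector search a production system would more likely use.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Dict, List


@dataclass
class Episode:
    task: str
    succeeded: bool
    note: str


class AgentMemory:
    def __init__(self, max_turns: int = 20) -> None:
        # Short-term: bounded conversation history (oldest turns drop off).
        self.short_term: Deque[str] = deque(maxlen=max_turns)
        # Working: scratchpad for intermediate results during one task.
        self.working: Dict[str, str] = {}
        # Long-term: persistent facts and preferences.
        self.long_term: List[str] = []
        # Episodic: records of past task executions.
        self.episodes: List[Episode] = []

    def add_turn(self, text: str) -> None:
        self.short_term.append(text)

    def recall(self, keyword: str) -> List[str]:
        """Naive keyword lookup; a real system would use vector search."""
        return [f for f in self.long_term if keyword.lower() in f.lower()]

    def failures_for(self, task: str) -> List[Episode]:
        """Past failed attempts at a task, so the agent can avoid repeating them."""
        return [e for e in self.episodes if e.task == task and not e.succeeded]
```

The bounded `deque` mirrors the context-window limit: once the turn budget is exhausted, older turns are discarded unless something has promoted them to long-term memory.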

The RAG Pattern

Retrieval-Augmented Generation (RAG) remains the dominant approach to grounding agent responses in verified information. The pattern is conceptually simple: before generating a response, the agent searches a knowledge base for relevant documents and includes them in its context.

In practice, RAG is deceptively complex. Document chunking strategy, embedding model selection, retrieval ranking, and context window management all significantly impact quality. The emerging best practice is “agentic RAG” — where the agent actively decides what to search for, evaluates whether the retrieved information is sufficient, and iterates until it has enough context to respond confidently.
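The agentic RAG loop can be sketched as search, judge, reformulate. In the toy version below, word overlap stands in for embedding similarity, and the `is_sufficient` and `rewrite` callables are hypothetical placeholders for what would normally be model calls.

```python
from typing import Callable, List, Set


def tokenize(text: str) -> Set[str]:
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Rank documents by word overlap with the query (a toy stand-in for embeddings)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d)), d) for d in docs]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [d for score, d in ranked[:k] if score > 0]


def agentic_retrieve(
    question: str,
    docs: List[str],
    is_sufficient: Callable[[str, List[str]], bool],
    rewrite: Callable[[str, List[str]], str],
    max_rounds: int = 3,
) -> List[str]:
    """Search, judge the context, and reformulate until it is judged sufficient."""
    context: List[str] = []
    query = question
    for _ in range(max_rounds):
        for doc in retrieve(query, docs):
            if doc not in context:
                context.append(doc)
        if is_sufficient(question, context):
            break
        # Not enough context yet: let the agent pick a new search query.
        query = rewrite(question, context)
    return context
```

The round limit matters in practice: without it, an agent that never judges its context sufficient would keep searching indefinitely and run up cost.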

Layer 5: Evaluation and Guardrails — Quality Control {#layer-5}

The difference between a demo and a production system is evaluation. LLM evaluation — systematic testing of agent outputs against expected results — has become a distinct engineering discipline.

Evaluation Frameworks

Production agent systems typically maintain evaluation suites that test:

- Accuracy: Does the agent give correct answers to known questions?

- Consistency: Does it give the same answer to the same question asked differently?

- Safety: Does it refuse harmful requests and avoid generating toxic content?

- Tool use correctness: Does it call the right tools with the right parameters?

- Latency and cost: Does it meet performance budgets?

Tools like Braintrust (which raised $80 million in February 2026 at an $800 million valuation), RAGAS (which pioneered reference-free evaluation for RAG pipelines), and DeepEval (an open-source framework offering 50+ LLM-evaluated metrics) have standardized these evaluation patterns. The most mature organizations run evaluation suites as part of their CI/CD pipeline — every model update, prompt change, or tool modification triggers a full regression test.
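A regression gate of this kind might look like the following minimal harness. It is not the API of any of the tools named above: the `Case` type, the substring check, and the 90% threshold are assumptions chosen to keep the example short.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Case:
    prompt: str
    expected_substring: str


def run_suite(agent: Callable[[str], str], cases: List[Case]) -> float:
    """Return the fraction of cases whose output contains the expected text."""
    if not cases:
        raise ValueError("empty evaluation suite")
    passed = sum(
        c.expected_substring.lower() in agent(c.prompt).lower() for c in cases
    )
    return passed / len(cases)


def gate(score: float, threshold: float = 0.9) -> None:
    """Fail the CI job when accuracy regresses below the threshold."""
    if score < threshold:
        raise SystemExit(f"eval regression: {score:.0%} < {threshold:.0%}")
```

In a pipeline, `gate(run_suite(agent, cases))` would run on every prompt or model change, and the non-zero exit from `SystemExit` is what stops the deploy.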

Alignment and Safety

Beyond functional correctness, agents must be aligned with their operator’s intentions. This is particularly critical for agents with real-world impact — those that can send emails, execute trades, modify code, or interact with customers.

The standard safety architecture involves:

- Input guardrails: Filter and validate user requests before they reach the model

- Output guardrails: Screen model responses for policy violations, PII leakage, or harmful content

- Action guardrails: Require human approval for high-stakes actions (financial transactions, data deletion, external communications)

- Monitoring: Log all agent actions and flag anomalous behavior for human review
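The four controls above can be sketched as small functions wrapped around the model and its tools. Everything here is illustrative: the email regex is a deliberately crude PII check, and the `HIGH_STAKES` set and the `approve` callback are hypothetical policy choices.

```python
import re
from typing import Any, Callable, Dict, List

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# Hypothetical policy: action names that always need human sign-off.
HIGH_STAKES = {"issue_refund", "delete_data", "send_email"}
audit_log: List[Dict[str, Any]] = []


def check_input(text: str, max_chars: int = 4000) -> str:
    """Input guardrail: reject empty or oversized requests."""
    cleaned = text.strip()
    if not cleaned:
        raise ValueError("empty request")
    if len(cleaned) > max_chars:
        raise ValueError("request too long")
    return cleaned


def redact_output(text: str) -> str:
    """Output guardrail: mask email addresses before the response leaves."""
    return EMAIL.sub("[redacted email]", text)


def run_action(
    name: str,
    args: Dict[str, Any],
    execute: Callable[[str, Dict[str, Any]], str],
    approve: Callable[[str, Dict[str, Any]], bool],
) -> str:
    """Action guardrail plus monitoring: gate high-stakes actions and log all."""
    approved = name not in HIGH_STAKES or approve(name, args)
    audit_log.append({"action": name, "args": args, "approved": approved})
    if not approved:
        return "blocked: awaiting human approval"
    return execute(name, args)
```

Note that the audit entry is written before the action executes, so blocked attempts are recorded as well as successful ones.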

Layer 6: Deployment and Operations — Running in Production {#layer-6}

The final layer of the stack covers how agents run in production environments.

Inference Infrastructure

Running foundation models at scale requires specialized infrastructure. The choice among self-hosted (on your own GPUs), cloud-hosted (via API providers like OpenAI, Anthropic, or Google), and hybrid approaches depends on volume, latency requirements, and data sensitivity.

For most organizations, API-based deployment is the pragmatic choice. The API providers handle model serving, scaling, and updates. The trade-off is vendor dependency and potentially higher per-query costs at scale.
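The cost side of that trade-off reduces to a break-even calculation. The function below is a back-of-the-envelope sketch; all three inputs are placeholders to be filled in with an organization's own figures, and it ignores engineering time and utilization.

```python
def break_even_queries(
    fixed_monthly_cost: float,
    api_cost_per_query: float,
    self_hosted_cost_per_query: float = 0.0,
) -> float:
    """Monthly query volume above which self-hosting becomes cheaper.

    Returns infinity when the API is no more expensive per query,
    since self-hosting then never pays back its fixed cost.
    """
    saving_per_query = api_cost_per_query - self_hosted_cost_per_query
    if saving_per_query <= 0:
        return float("inf")
    return fixed_monthly_cost / saving_per_query
```

With a fixed cost of 100 per month, an API cost of 0.5 per query, and a self-hosted marginal cost of 0.25 per query, the break-even point is 400 queries per month; below that volume the API is the cheaper option.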

Observability and Monitoring

Agent systems are inherently non-deterministic — the same input can produce different outputs. This makes traditional monitoring approaches (check if the response matches the expected output) insufficient.

Production agent monitoring focuses on:

- Trace logging: Recording every step of the agent’s reasoning chain, including tool calls, intermediate outputs, and decision points

- Anomaly detection: Flagging unusual patterns — unexpected tool calls, unusually long response times, repeated errors

- Cost tracking: Monitoring token usage and API costs per agent, per task, and per user

- User feedback loops: Capturing explicit (thumbs up/down) and implicit (task completion, retry rate) user signals

Platforms like LangSmith (tightly integrated with the LangChain ecosystem, supporting Python, TypeScript, Go, and Java SDKs), Arize (which has expanded from traditional ML monitoring into LLM observability through its Phoenix open-source library), and Helicone (an open-source platform that has processed over 2 billion LLM interactions, recently acquired by Mintlify) have built specialized observability tools for this purpose.
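Under the hood, trace logging, cost tracking, and simple anomaly flags can be sketched with one small record type. This is not how any of the platforms above are implemented; the `Trace` class, the step kinds, and the thresholds are assumptions for illustration.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Trace:
    task_id: str
    steps: List[Dict[str, Any]] = field(default_factory=list)

    def log(self, kind: str, detail: str, tokens: int = 0) -> None:
        """Record one step: a model call, tool call, error, and so on."""
        self.steps.append(
            {"kind": kind, "detail": detail, "tokens": tokens, "ts": time.time()}
        )

    def total_tokens(self) -> int:
        return sum(s["tokens"] for s in self.steps)

    def cost(self, price_per_1k_tokens: float) -> float:
        """Token cost for this task at a flat per-1,000-token price."""
        return self.total_tokens() / 1000 * price_per_1k_tokens

    def anomalies(self, max_tool_calls: int = 5) -> List[str]:
        """Flag unusually many tool calls or repeated errors."""
        flags: List[str] = []
        tool_calls = sum(1 for s in self.steps if s["kind"] == "tool_call")
        if tool_calls > max_tool_calls:
            flags.append(f"{tool_calls} tool calls exceeds limit of {max_tool_calls}")
        errors = sum(1 for s in self.steps if s["kind"] == "error")
        if errors >= 2:
            flags.append("repeated errors")
        return flags
```

Keeping one trace per task makes it straightforward to aggregate cost per agent or per user afterwards, since each record already carries its own token counts.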

Putting It All Together: A Real-World Agent Architecture {#putting-it-together}

Here is what a production-grade AI agent system looks like in practice, using a customer support agent as an example:

1. Request arrives: A customer asks “Why was I charged twice for my last order?”

2. Orchestration (LangGraph): The framework routes the query through a decision tree. Is this a billing question? Does it require order lookup? Should it escalate to a human?

3. Memory retrieval: The agent checks its persistent memory. Has this customer contacted support before? What is their account history?

4. Tool use (MCP): The agent calls the order management API to look up the customer’s recent transactions. It finds two charges for the same order.

5. Reasoning (Claude/GPT-4o): The model analyzes the transaction data, identifies that the duplicate charge was caused by a payment gateway timeout that triggered a retry, and drafts a response.

6. Guardrails: The output filter checks the response for policy compliance, PII exposure, and tone. The action filter confirms that issuing a refund does not exceed the agent’s authorization level before the refund is executed.

7. Response: The customer receives a clear explanation and a confirmation that the duplicate charge has been refunded.

8. Monitoring: The entire interaction is logged — reasoning trace, tool calls, response time, token count. The evaluation system scores the response against quality benchmarks.

This entire sequence typically completes in 3-8 seconds — faster than most human agents, and available 24/7.

The Stack Is Still Forming {#stack-forming}

The agentic AI stack in 2026 resembles the web development stack circa 2005 — the foundational pieces exist, but standards are still being established, best practices are still emerging, and today’s dominant frameworks may be tomorrow’s legacy systems.

Several open questions will shape the stack’s evolution:

Will orchestration move into the model? As models become better at planning and tool use natively, the need for external orchestration frameworks may diminish. Anthropic’s approach — minimal framework, maximum model capability — suggests this direction.

Will MCP become the universal standard? MCP has strong momentum and broad adoption, but Google’s A2A (Agent-to-Agent) protocol — now at version 0.3, open-sourced under the Apache 2.0 license, and governed by the Linux Foundation — represents a complementary vision focused on agent-to-agent communication rather than agent-to-tool interaction. The two protocols may end up coexisting rather than competing, addressing different layers of the agent ecosystem.

Will agents need their own operating systems? The transition from individual agents to coordinated agent systems suggests a need for AI operating systems — platforms that manage agent lifecycles, resource allocation, and inter-agent communication. This is where the AI revolution is heading.

What is clear is that the stack is becoming more standardized, more robust, and more accessible. Building a production AI agent in 2026 no longer requires deep ML expertise — it requires systems engineering, good evaluation practices, and a clear understanding of which layers to build versus buy.

Sources & Further Reading

- LangChain Documentation and Architecture Guide — LangChain

- The Model Context Protocol Specification — Anthropic

- Building Effective Agents — Anthropic Research

- AutoGen: Enabling Next-Gen Multi-Agent Applications — Microsoft Research

- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks — Lewis et al.

- A Survey on Large Language Model-Based Autonomous Agents — Wang et al.

- The Shift from Models to Compound AI Systems — Berkeley AI Research